On April 14, 2026, OpenAI introduced GPT-5.4-Cyber, a fine-tuned version of its flagship GPT-5.4 model built for defensive cybersecurity work, and confirmed it will only be released to vetted security vendors, researchers, and verified individual defenders enrolled in the company's expanded Trusted Access for Cyber program. The launch came exactly one week after rival Anthropic announced Mythos, its own gated defense model under a controlled initiative called Project Glasswing, and it cements a shift in how the largest AI vendors plan to ship their most capable security systems.

The release is the clearest signal yet that frontier model providers are no longer comfortable letting their most capable cyber systems sit behind a single API key and a credit card. OpenAI is restricting GPT-5.4-Cyber on the principle that capability and access have to be calibrated together. The model lowers the refusal boundary for sensitive work like vulnerability research and binary reverse engineering, and the company has decided those capabilities require a verified human attached to them.

What GPT-5.4-Cyber actually does differently

The base GPT-5.4 model, which OpenAI rolled out earlier this year, was the first OpenAI release classified as "high" cyber capability under the company's internal Preparedness Framework. GPT-5.4-Cyber takes that base and fine-tunes it specifically for defensive workflows, with two changes that matter to security teams.

The first change is a relaxed refusal boundary. The standard model is trained to decline a wide range of requests that look like offensive security work, and that conservatism creates real friction for defenders who need to think like attackers in order to patch software. GPT-5.4-Cyber is more willing to engage with prompts about exploitation patterns, malware analysis, and offensive tooling when the user is verified.

The second change is binary reverse engineering, a capability the consumer-facing models do not expose at this depth. The cyber variant can take compiled software without access to its source code, decompose it, and produce useful output about its function, vulnerabilities, and likely intent. That is the daily work of a malware analyst, and OpenAI is now offering it as a service to people the company can verify.

OpenAI also added new tiers to its Trusted Access for Cyber program, which it launched in February with a few hundred initial testers. The company says it is now scaling to thousands of verified individual defenders and hundreds of teams responsible for protecting critical software infrastructure. The highest tier of access, where GPT-5.4-Cyber lives, requires additional authentication beyond standard enterprise verification.

Why this matters: the dual-use problem catches up

For most of the last three years, the assumption inside the AI industry was that you could ship a single frontier model to everyone with safeguards layered on top, and the safeguards would absorb the misuse risk. That assumption is breaking down in cybersecurity faster than in any other domain, because defensive and offensive capability look almost identical from the model's perspective.

OpenAI articulated the new logic directly in its announcement. Cyber capabilities are inherently dual-use, the company wrote, which means risk is not defined by the model alone. It depends on who the user is, what trust signals surround them, and what level of access they are given. Broad access to general models with safeguards can coexist with much more granular controls for higher-risk capabilities, but only if the verification layer is real.

"Broad access to general models with safeguards can coexist with more granular controls for higher-risk capabilities, supported by stronger verification, clearer signals of intent, and better visibility into use."

OpenAI, in its announcement of GPT-5.4-Cyber

That is a different approach from the one OpenAI was operating under as recently as the GPT-5.2 release. Back then, the company added cyber-specific safety training and shipped the model widely. With GPT-5.3-Codex it added more safeguards and still shipped widely. With GPT-5.4 it began classifying the model as high-capability under the Preparedness Framework but still released it broadly with stronger filters. GPT-5.4-Cyber is the first release where capability and distribution are explicitly decoupled.

An analogy that explains the gating

The clearest way to think about what OpenAI is doing is to compare it to how the firearms industry sells optics. A retail customer can walk into any sporting goods store and buy a midrange rifle scope. A military procurement officer can buy a thermal weapon sight whose export to civilian buyers is restricted. The optics are not technically different in kind, but the access path is. The military sight is gated by verification, end-user agreements, and accountability; the retail scope is not.

OpenAI's argument is that frontier cyber models need the same two-tier structure. The general public can use GPT-5.4 through ChatGPT and the API with the standard refusal boundary in place. Verified defenders can use GPT-5.4-Cyber with the boundary loosened, but only after they have proven who they are and accepted oversight conditions. The company's trusted access pathway is the verification layer that makes that two-tier model possible.

The Anthropic shadow over this release

OpenAI did not name Anthropic in its announcement, but the timing left no doubt. On April 7, 2026, Anthropic unveiled Mythos, an unreleased frontier model being deployed under Project Glasswing exclusively to a handful of major partners for defensive cybersecurity work. Anthropic claimed Mythos had already discovered "thousands" of major vulnerabilities across operating systems, web browsers, and other widely deployed software during controlled testing.

That was an inflection point for the industry. For the first time, a frontier lab deployed a defense-only model with measurable real-world output, and made the case that the most capable cyber systems should be ring-fenced from general public access for safety reasons. Reuters reported that OpenAI's GPT-5.4-Cyber was widely seen inside the security research community as a direct response.

The Mythos announcement also drew a notable critic. Bank of England Governor Andrew Bailey said this week that he sees major cybersecurity risks emerging from the new Anthropic model, citing concerns that even a controlled rollout could be reverse-engineered or jailbroken in ways that would benefit attackers more than defenders. OpenAI's decision to ship a more conservative tier structure, rather than a single concentrated release, may be partly a reaction to that pushback.

What defenders actually get from this

The practical question for security teams is whether GPT-5.4-Cyber is meaningfully more useful than the general GPT-5.4 model for daily defensive work. OpenAI's pitch, embedded in the announcement, focuses on three workflows where the standard model creates the most friction.

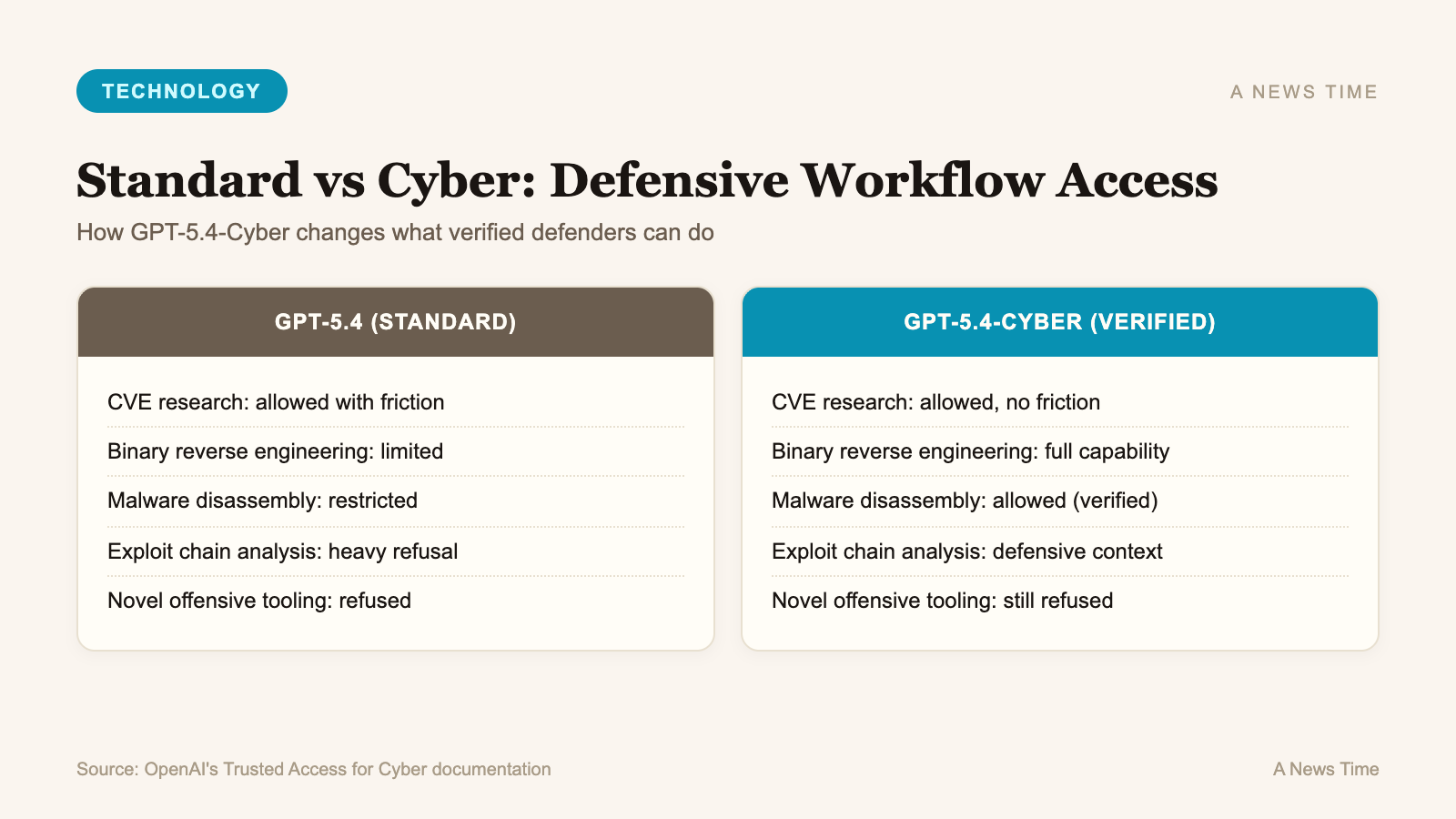

| Defensive Workflow | GPT-5.4 (Standard) | GPT-5.4-Cyber (Verified Tier) |
|---|---|---|
| Vulnerability research on known CVEs | Allowed with friction | Allowed, no friction |
| Binary reverse engineering of unknown samples | Limited | Full capability |
| Malware behavior analysis from disassembly | Restricted | Allowed with verification |
| Exploit chain explanation for patching | Heavy refusal | Permitted in defensive context |
| Generation of net-new offensive tooling | Refused | Refused |

The bottom row matters. OpenAI is not opening the door to the production of offensive tools that did not previously exist. The cyber model is meant to accelerate analysis of things that already exist in the wild, not to help anyone build novel weapons. That distinction is what the company is asking verified defenders to honor in exchange for access.

The scale OpenAI is targeting

Numbers from the announcement show how aggressively OpenAI plans to expand the program. The Trusted Access for Cyber program has scaled from hundreds of testers in February to a target of thousands of verified individual defenders and hundreds of organizational teams in the coming weeks. OpenAI also disclosed that its Codex Security automated vulnerability scanner, launched in private beta last fall, has contributed to over 3,000 critical and high-severity vulnerability fixes across the open source ecosystem since its limited release, alongside many more lower-severity findings.

Codex Security is also tied to a broader $10 million Cybersecurity Grant Program that OpenAI launched to fund defender-side work and open source security infrastructure. The company is positioning all of this as a single coordinated push to grow defensive capability faster than offensive capability, and to make sure that growth does not depend on any one organization deciding centrally who counts as a legitimate defender.

That last point is rhetorical but consequential. OpenAI's announcement repeatedly emphasizes that the company does not want to be the entity that decides who can defend themselves. The verification-based model is meant to push that decision into a more automated, criteria-based process, with know-your-customer (KYC) and identity checks doing the gating instead of manual review by OpenAI staff. The company believes that approach scales; the open question is whether it scales fast enough.

What to watch next

The next few weeks will determine whether GPT-5.4-Cyber becomes a serious tool for the defender community or a curiosity that smaller security teams cannot actually access. The verification process is a real bottleneck. Enterprise customers can request trusted access through their OpenAI account representative, but individual defenders have to go through identity checks at OpenAI's cyber portal, and the highest tier requires additional authentication. If that process is too slow, GPT-5.4-Cyber will end up benefiting only the largest security vendors, the same ones that already have privileged access to most frontier models.

The other thing to watch is whether OpenAI's tiered approach holds up against the same jailbreak research that has historically chipped away at safety boundaries on its consumer models. A model with a relaxed refusal boundary is, by definition, more susceptible to being pushed further in unintended directions. OpenAI's announcement acknowledges this and says the cyber variant will come with stricter deployment controls, including limitations on Zero Data Retention configurations where the company has less visibility into how the model is being used. Those controls will be tested.

For now, the bigger picture is the one defenders have been arguing for since the GPT-4 era: that frontier AI cybersecurity capabilities are too consequential to ship as a single product to a single audience. CNET noted that GPT-5.4-Cyber appears to be OpenAI's structural response to that argument, and Anthropic's Mythos is the same response from a different direction. The question for the next release cycle is which other frontier labs follow, and whether the verification model becomes the new default for capabilities that sit in the dual-use gray zone.

One thing is settled. The era of shipping frontier cyber models with nothing but a content policy and a hope is ending. What replaces it is still being negotiated, and OpenAI's release this week is the clearest draft of the new contract so far.

Frequently Asked Questions

Can the public access GPT-5.4-Cyber through ChatGPT?

No. GPT-5.4-Cyber is restricted to vetted security vendors, organizations, and individual defenders verified through OpenAI's Trusted Access for Cyber program. Standard ChatGPT and API users will continue to access the general GPT-5.4 model with normal safeguards in place.

How is GPT-5.4-Cyber different from the regular GPT-5.4 model?

It is fine-tuned to be more permissive on defensive cybersecurity tasks, including binary reverse engineering, vulnerability research, and malware analysis. The refusal boundary is lower for security-related prompts, but the model still refuses to generate net-new offensive tooling.

How do I get access to the Trusted Access for Cyber program?

Individual defenders can verify their identity through OpenAI's cyber access portal. Enterprises can request access through their existing OpenAI account representative. Higher-tier access, including GPT-5.4-Cyber, requires additional authentication beyond standard enterprise verification.

How does this compare to Anthropic's Mythos model?

Anthropic announced Mythos on April 7, 2026 under its Project Glasswing initiative, deploying it to a small set of partner organizations exclusively for defensive work. OpenAI's approach is broader, scaling to thousands of verified defenders rather than a handful of partners, but both companies are restricting their most capable cyber models from general public access.

What is the Preparedness Framework?

It is OpenAI's internal classification system for evaluating model capabilities across domains including cybersecurity, biological risk, and persuasion. GPT-5.4 was the first release classified as "high" cyber capability under the framework, a designation that triggered additional safeguards. GPT-5.4-Cyber is fine-tuned from that GPT-5.4 base model.