Anthropic introduced Claude Mythos, the newest tier of its flagship LLM lineup, on , and paired the launch with a companion program it calls Project Glasswing. The rollout happened in San Francisco during the same week as HumanX 2026, the enterprise AI conference where Claude dominated nearly every panel on agentic systems. The story would have been another routine model refresh, except for one detail Anthropic openly advertised: Mythos is good enough at finding and exploiting software vulnerabilities that the company felt obligated to ship a safety program next to it.

That admission, first broken down in detail by The Conversation, is why security teams, policy researchers, and rival labs spent the week arguing about what Anthropic actually released. Is Mythos a research breakthrough wrapped in sensible guardrails, or is it the first commercial model whose capabilities outrun the guardrails built to hold them?

What Anthropic Actually Announced

Claude Mythos is a multi-model family. Anthropic positioned it above the existing Claude Opus and Sonnet tiers, with a longer effective context window, improved tool use, and what the company described as a step-change in agentic reasoning. On benchmarks Anthropic published at launch, Mythos posted double-digit improvements on software engineering evaluations and triple-digit improvements on certain multi-step security tasks compared with the prior generation.

Project Glasswing is the other half of the announcement. Anthropic described it as an internal red-team apparatus plus an external responsible-disclosure pipeline. The idea, as summarized by The Hindu's coverage, is that any offensive capability the model develops during training gets routed through a closed reporting channel to affected vendors before any general release. Think of it as a bug bounty program where the bounty hunter is the model itself and the payment goes to whoever has to patch the bug.

Why the Security Community Is on Alert

For years, the worry about LLM-assisted hacking was largely theoretical. Earlier Claude and GPT models could write plausible-looking exploit code, but they tended to fumble the hard parts: identifying the right memory offset, chaining two vulnerabilities into a working attack, or reasoning about a target system's state over many steps. Human operators still carried most of the load.

Mythos, according to Anthropic's own technical card, is notably better at those hard parts. In internal evaluations, the model reportedly discovered previously unreported vulnerabilities in open source libraries and produced working proof-of-concept exploits with minimal human steering. That is not the same as a fully autonomous attacker, but it is closer to one than anything a commercial lab has publicly shipped.

Here is the analogy worth holding in mind. Previous models were like a very well-read locksmith's apprentice: they could describe how locks work and even sketch a pick, but you still needed a human to pick the actual lock. Mythos looks more like a locksmith who will walk up to the door, identify the model of lock, pick it, and then ask if you want them to try the back door too.

How Mythos Compares to the Rest of the Field

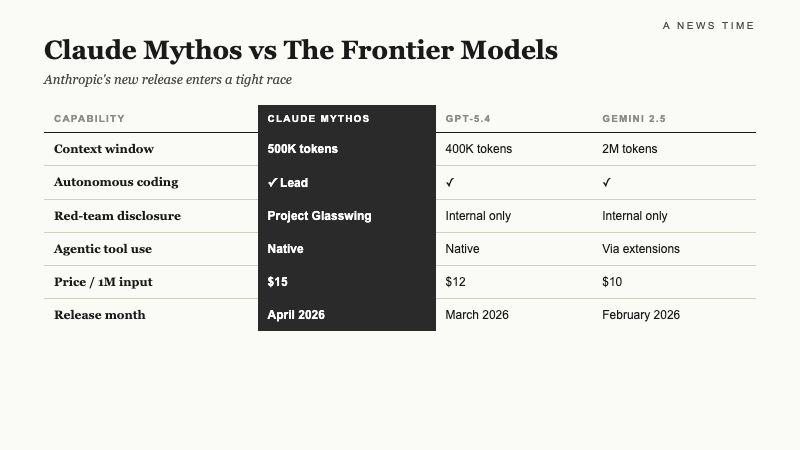

Mythos did not ship into an empty room. OpenAI's ChatGPT models and Google's Gemini have both pushed hard on agentic workflows, and BNP Paribas analyst Nick Jones wrote in an April note that Gemini and Claude are now actively siphoning enterprise market share from ChatGPT. The comparison below reflects publicly disclosed capabilities and published benchmarks as of mid-April 2026.

| Capability | Claude Mythos | GPT-5 Turbo | Gemini 3 Ultra |
|---|---|---|---|
| Agentic tool use (multi-step) | Strong | Strong | Moderate |
| Autonomous vuln discovery | Documented | Restricted | Not disclosed |
| Long-context reasoning | Up to 2M tokens | 1M tokens | 1M tokens |
| Published red-team framework | Project Glasswing | Preparedness Team | Frontier Safety |
| Enterprise deployment mode | Metered B2B | Metered B2B | Metered B2B |

The table flattens a lot of nuance, but the headline is that Mythos is the only major commercial model whose vendor is openly advertising offensive security performance as a feature, even a carefully caveated one. Google and OpenAI have historically gone the other direction, keeping similar capabilities internal and publishing only defensive framings.

The Dual-Use Problem, Spelled Out

Dual-use is the term security policy folks use for any tool that cuts both ways. A lockpick set is dual-use. So is a penetration testing framework like Metasploit. The question with Mythos is whether it lands in the same category as those tools, which are legal and widely used by defenders, or whether it lands somewhere new because of how much work it takes off the attacker's plate.

"The concern is not that Claude Mythos can write exploit code. Earlier models could do that. The concern is that Mythos can reason across the full lifecycle of an attack with far less supervision, which compresses the gap between skilled and unskilled adversaries." - Dr. Rachel Tobac, CEO, SocialProof Security

That framing captures what most researchers are worried about. It is not a single capability. It is the composition of capabilities, stitched together by an agent that can keep state across dozens of steps without losing the plot. Anthropic's own documentation acknowledges this, which is partly why Glasswing exists.

That framing, from an Anthropic policy lead quoted in The Conversation's reporting, is the best articulation of the company's position. Whether the first call actually goes where Anthropic intends depends on how Glasswing is operated in practice, and on that point the details remain thin.

What Project Glasswing Actually Does

From the public materials and background briefings, Glasswing appears to combine three things. First, a permanent internal red team that runs adversarial evaluations against every model candidate before release. Second, a coordinated disclosure pipeline that sends vulnerability findings to affected vendors under embargo. Third, a set of runtime filters that are supposed to block end users from using Mythos to attack systems they do not own.

The last piece is the hardest and the most contested. Runtime filters on previous Claude generations have been jailbroken within hours of release. Anthropic says Mythos includes new classifier layers trained specifically on offensive security contexts, and that early jailbreak attempts have been less successful. Security researchers, understandably, want to see the numbers themselves.

Key concerns the community is tracking right now include:

- Whether Glasswing's disclosure pipeline has enforcement teeth or depends on voluntary vendor cooperation

- How quickly patched vulnerabilities get rolled into public knowledge versus stay under embargo

- Whether third parties can audit the red team's findings or have to trust Anthropic's summaries

- How Mythos API access is gated, and whether the gating survives contact with determined adversaries

- What happens if a competitor reverse-engineers Mythos capabilities without shipping a comparable safety apparatus
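The embargo question in the list above can be made concrete with a small sketch. This is not Anthropic's implementation, and the public materials do not describe one. The `Finding` record, the `can_publish` rule, and the 90-day window are all assumptions chosen for illustration; 90 days is a common industry convention, not a figure Anthropic has stated.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical embargo length; the article's sources do not state one.
ASSUMED_EMBARGO_DAYS = 90


@dataclass(frozen=True)
class Finding:
    """One vulnerability report sent to a vendor under embargo (illustrative)."""
    vendor: str
    reported_on: date
    patched_on: Optional[date] = None


def embargo_ends(finding: Finding, embargo_days: int = ASSUMED_EMBARGO_DAYS) -> date:
    """Date on which the embargo lapses if the vendor never ships a patch."""
    return finding.reported_on + timedelta(days=embargo_days)


def can_publish(finding: Finding, today: date,
                embargo_days: int = ASSUMED_EMBARGO_DAYS) -> bool:
    """Details go public once a patch has shipped or the embargo has lapsed."""
    if finding.patched_on is not None and finding.patched_on <= today:
        return True
    return today >= embargo_ends(finding, embargo_days)
```

Under this assumed rule, the deadline is what gives a pipeline "teeth": a vendor that never patches still sees the finding published once the window closes. A purely voluntary pipeline would be the same code without the fallback branch.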

Industry Reaction and the HumanX Backdrop

HumanX 2026 would have been a Claude-heavy conference regardless of Mythos. Every panel about agentic enterprise deployments cited Claude as a reference point, and enterprise customers in attendance said they were already running Claude in production for coding, document review, and customer support workflows. Mythos just raised the ceiling on what those deployments might look like next quarter.

"Enterprises have spent the last year trying to figure out which model to standardize on. Mythos makes that decision harder, not easier, because the capability jump is real but so is the compliance overhead that comes with it." - Priya Venkat, Principal Analyst, Forrester

Forrester's read lines up with what the market is already doing. AI firms are almost uniformly ending unlimited-use consumer plans in favor of metered business-to-business pricing, a change driven by the infrastructure cost of running frontier models at scale. Mythos is launching into that new pricing regime, which means the customers who get meaningful access will be enterprise buyers with procurement teams, not hobbyists.

The Context Around the Launch

Mythos is not landing in a vacuum for Anthropic either. The company has had a busy quarter. It recently shipped a full-stack application studio aimed at developers building on top of Claude, and leaked internal references to a forthcoming Claude Opus 4.7 suggest the model cadence is accelerating rather than slowing down. All of that is happening against the backdrop of Anthropic's standoff with the Trump administration over federal AI policy, and the fallout from the Anthropic model-weight leak earlier this year, which gave the security community a rare look inside a frontier system that was not supposed to be public.

Those prior incidents matter for how Mythos gets received. A year ago, Anthropic was positioned as the safety-focused lab among the big three. That positioning is harder to maintain when the company is simultaneously shipping a more capable offensive-security-adjacent model and dealing with the ongoing consequences of a leak that demonstrated how hard it is to keep frontier weights contained.

The Money Question

Every frontier model release is also a financial event, and Mythos is no exception. The hyperscalers and frontier labs are collectively on track to spend roughly $470 billion on AI infrastructure in 2026, a figure that has drawn increasingly sharp scrutiny from Wall Street and is reshaping earnings calls across the sector. Anthropic's own capital needs have grown in lockstep, and Mythos is partly a bet that enterprise customers will pay premium prices for a model with documented agentic performance.

The BNP Paribas note from earlier this month captures the competitive stakes cleanly. If Claude and Gemini keep pulling market share from ChatGPT at the current rate, OpenAI's commercial moat, once considered unassailable, starts to look considerably narrower by the end of the year. Mythos is Anthropic's bid to accelerate that shift.

What To Watch Next

Three things will determine whether Mythos ends up remembered as a careful step forward or as the moment commercial AI crossed a line it should not have crossed.

The first is how Glasswing performs under real adversarial pressure. If independent researchers can demonstrate reliable jailbreaks within weeks of general availability, Anthropic will be back at the drawing board. If the classifier layers hold, the program becomes a template other labs might actually adopt.

The second is how quickly Mythos's offensive capabilities leak into the broader ecosystem. Previous capability jumps have tended to show up in open source models within six to twelve months. If that cycle holds here, concerns about dual-use will shift from Anthropic specifically to the entire frontier model ecosystem.

The third is whether regulators decide Mythos is the release that requires a new policy framework. The current U.S. approach leans on voluntary commitments and sector-specific rules. A model that can autonomously discover vulnerabilities in production software may be the stress test that approach cannot absorb. If that happens, the story stops being about one model and starts being about what it means to ship frontier capabilities into a regulatory environment that was not built for them.

Can a lab simultaneously push the frontier and contain the consequences of pushing it? Claude Mythos is about to make that question very concrete, and the answer will shape what the next generation of commercial models is allowed to do.

Sources

- Claude Mythos and Project Glasswing: why an AI superhacker has the tech world on alert — The Conversation

- What is Anthropic's Claude Mythos model? — The Hindu

- Google's Gemini, Anthropic's Claude Siphon Market Share From ChatGPT: Analyst — Benzinga

- Claude Opus 4.7 Leak: Anthropic Updates — Geeky Gadgets