Google on Wednesday, , introduced the eighth generation of its tensor processing units at Google Cloud Next, splitting the product line into two distinct chips for the first time. The TPU 8t is optimized for AI training. The TPU 8i is optimized for inference, the stage at which trained models serve user queries at scale. Both were co-developed with Broadcom over more than a decade and co-designed with Google DeepMind for Anthropic's Claude models, Google's own Gemini models, and the agentic AI workloads that now drive most hyperscaler GPU demand.

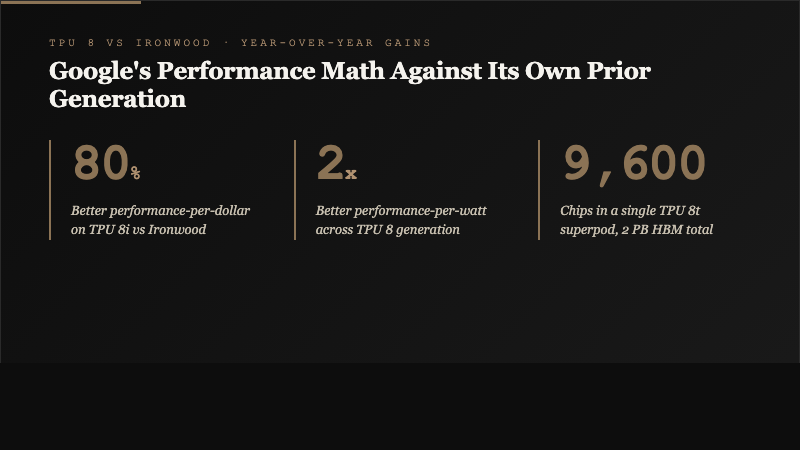

The headline performance claims are structural rather than marginal. TPU 8i delivers 80 percent better performance-per-dollar than Google's prior Ironwood TPU, meaning the same deployment budget now serves nearly twice the inference demand. A TPU 8t superpod scales to 9,600 chips interconnected through 2 petabytes of high-bandwidth memory, double the interchip bandwidth of Ironwood. Both chips run on Google's Axion CPU host for the first time, enabling system-level efficiency improvements beyond what a chip-only upgrade would deliver.

Why Google Split Training and Inference

The split of TPU 8 into separate training and inference chips is the most strategically significant design choice in the release. Through the first seven generations, Google's TPUs were architected as unified silicon that handled both workloads. That design worked when AI training dominated the capex budget and inference was a secondary concern. In the agentic AI era, inference has overtaken training as the dominant workload, and the economic incentive to optimize inference separately has grown faster than an integrated design can accommodate.

Google's own framing is that agentic AI operates "in continuous loops of reasoning, planning, execution and learning" that place a "new set of demands on infrastructure." The company determined that separate, specialized chips would deliver better results than a single unified design. TPU 8i was specifically built to handle larger and more complex workloads while adapting to the evolving capabilities of AI models in production deployments.

"Several years ago, we anticipated rising demand for inference from customers as frontier AI models are deployed in production and at scale."

Google engineering blog on the TPU 8 launch, April 22, 2026

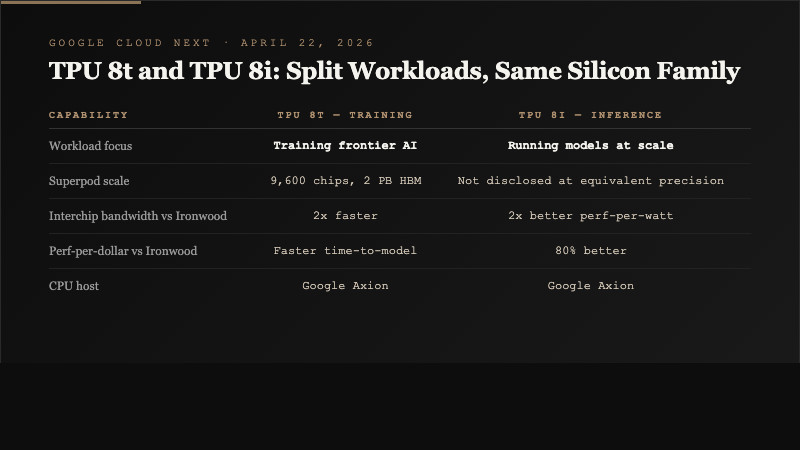

| Capability | TPU 8t (Training) | TPU 8i (Inference) |

|---|---|---|

| Workload focus | Training frontier AI models | Running AI models at inference scale |

| Superpod scale | 9,600 chips, 2 PB HBM | Not disclosed at equivalent precision |

| Interchip bandwidth vs Ironwood | 2x | 2x better perf-per-watt |

| Perf-per-dollar vs Ironwood | Faster training time-to-model | 80% better |

| CPU host | Google Axion | Google Axion |

| Available to cloud customers | Later in 2026 | Later in 2026 |

The Ironwood Comparison Matters

Ironwood was Google's seventh-generation TPU and the reference point the company is using for TPU 8 performance claims. The 80 percent performance-per-dollar improvement on TPU 8i and the 2x improvement in performance-per-watt are substantial generation-over-generation gains. They also position Google to argue to cloud customers that its TPU-based infrastructure is increasingly competitive with NVIDIA GPU-based alternatives on a total-cost-of-inference basis.

The training chip's specs tell a parallel story. The TPU 8t superpod at 9,600 chips with 2 petabytes of high-bandwidth memory is the largest training cluster Google has announced as a single interconnected unit. Double the interchip bandwidth of Ironwood means data movement between chips is no longer the binding constraint it was on prior generations, which matters for the largest frontier models where model state is distributed across many chips simultaneously.

Storage access has also been accelerated in TPU 8t. Google said the chip can "access storage faster," a terse disclosure that matters because storage-to-compute data movement is often the hidden bottleneck in training runs that exceed on-chip memory capacity. The combination of higher interchip bandwidth plus faster storage access means larger models can be trained closer to theoretical peak performance rather than waiting on data movement.

The NVIDIA Dynamics

Google is a large NVIDIA customer. The company confirmed at Cloud Next that it will be among the first cloud providers to offer NVIDIA's upcoming Vera Rubin AI platform later this year. The Vera Rubin offering is positioned alongside, not instead of, the TPU 8 lineup. That duality is consistent with Google's public stance that its TPUs are not a replacement for NVIDIA hardware but rather a complementary option for workloads where the TPU architecture is economically advantageous.

Unlike NVIDIA, Google does not sell its TPUs directly to external customers. The chips are available to enterprise customers only through Google Cloud. Anthropic, which uses TPUs to train and run Claude models, is the most visible external user. The Broadcom partnership is structural: Google and Broadcom co-design the silicon and have collaborated on every TPU generation going back more than a decade.

"With the improvements, the inference chip's performance-per-dollar is 80% better compared with the previous Ironwood TPU, meaning users can meet nearly twice the demand at the same cost."

Google engineering blog, April 22, 2026, as reported by MarketWatch

For NVIDIA, the TPU 8 release is a competitive signal but not a near-term threat. NVIDIA's market position in AI training remains dominant, and its Rubin platform extends that dominance into 2027. The inference market is more competitive. NVIDIA has been working to improve its inference capabilities, including through a nonexclusive licensing agreement with inference-chip maker Groq in December 2025, but whether NVIDIA retains the same competitive position in inference as it holds in training is an open question that TPU 8i directly pressures.

The Power Management Story

Google disclosed that TPU 8 chips integrate power management into the silicon itself, adjusting power draw dynamically based on workload demand. That integration is the main driver of the 2x performance-per-watt improvement claim. In a data-center context where power availability is now the binding constraint on AI buildout rather than silicon availability, performance-per-watt is arguably more economically important than performance-per-chip.

The system-level co-design with the Axion CPU host compounds that power efficiency. A CPU host in an AI accelerator system manages data flow, task scheduling, and model orchestration. When the CPU host is architecturally paired with the accelerator, as Axion is with TPU 8, the system can operate at higher sustained utilization than when accelerators are paired with generic CPU hosts. Google said that co-design produces "efficiency on a system level, rather than just on a chip level."

The Agentic AI Positioning

Google's framing of TPU 8 as an "Agentic Era" chip is a strategic bet on the next phase of AI deployment. Agentic AI, in industry usage, refers to AI systems that complete tasks with minimal human prompting by chaining together reasoning, planning, tool use, and learning across multiple steps. The compute profile of agentic workloads differs from chatbot-style inference: the models run for longer, make more tool calls, and require lower-latency response at each step of the chain.

TPU 8i's design addresses the specific pain points of agentic inference. The "waiting room effect" that Google cited as motivating the TPU 8i redesign refers to queue delays that accumulate when AI model usage spikes. Eliminating those delays matters more for agentic workflows than for one-shot inference because agentic chains are only as fast as their slowest step. The chip's performance-per-dollar improvement flows directly into the economics of scaling agentic deployments.

What to Watch

The first data point will be the actual general-availability schedule for TPU 8t and TPU 8i in Google Cloud. Both chips are expected to reach cloud customers later in 2026. The second will be published benchmarks from Anthropic or other major TPU users. Independent benchmarks, particularly ones that compare TPU 8i inference against NVIDIA H200 or Blackwell-class GPUs on the same workloads, will determine whether the 80 percent performance-per-dollar claim holds up outside Google's controlled comparisons.

The third signal is whether additional external customers beyond Anthropic adopt TPU 8. Google's business model keeps TPU sales inside Google Cloud rather than selling the silicon directly, but enterprise customers can still vote with their workloads. If TPU 8 wins meaningful share of inference traffic from customers who previously ran on NVIDIA-based cloud infrastructure, Google will have validated the architectural bet. If adoption stays narrow, the competitive landscape will consolidate back around NVIDIA's dominant position.

For related coverage, see our reporting on TSMC's A13 node that will fabricate next-generation AI accelerators, on Google's parallel Marvell-partnered custom chip program, and on NVIDIA's Vera Rubin roadmap.

Sources

- Google debuts two custom chips in latest bid to challenge Nvidia's dominance - MarketWatch

- Google Steps Up Its Long Running Challenge to Nvidia With New AI Chips - Barron's

- Google TPU 8t vs Nvidia: 80% better price UAE AI - TBreak

- Google to launch new AI chips with separate training, inference - Tech in Asia