Alphabet's Google is in talks with Marvell Technology to co-develop two new chips aimed at running AI models more efficiently, The Information reported on Sunday, citing two people with knowledge of the discussions. According to the report, one chip is a memory processing unit designed to work alongside Google's existing tensor processing units, and the second is a next-generation TPU built specifically for running AI models. The companies aim to finalize the memory processing unit's design as soon as next year before handing it off for test production, the sources told The Information. Google and Marvell did not respond to requests for comment.

The talks matter for three reasons at once. They extend Google's multi-year effort to build a credible alternative to Nvidia's dominant GPU stack. They hand Marvell a marquee design win in a sector where every new AI accelerator program looks like a valuation catalyst. And they sharpen a specific architectural bet that most hyperscalers are quietly running in parallel: that the next performance leap in AI systems comes from purpose-built memory components, not just faster compute.

What Google Is Actually Trying to Build

The memory processing unit (MPU) in the reported design is the more interesting chip of the two. AI workloads, particularly inference at production scale, are increasingly memory-bound rather than compute-bound. Moving model weights and attention states between high-bandwidth memory and compute cores costs energy, time, and silicon area. A dedicated memory processor that sits between Google's TPUs and the memory subsystem could offload data movement, compression, and caching operations that currently burn a meaningful share of the power budget.
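The memory-bound versus compute-bound distinction can be made concrete with a roofline-style estimate. The sketch below is illustrative only: the `Accelerator` figures are hypothetical round numbers, not specifications of any Google, Marvell, or Nvidia chip, and `decode_intensity` assumes a simplified serving step in which each weight is read once and used for one multiply-add per sequence in the batch, ignoring attention-state traffic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Accelerator:
    """Hypothetical accelerator described by two headline numbers."""
    peak_flops: float      # peak compute throughput, FLOP/s
    mem_bandwidth: float   # memory bandwidth, bytes/s

    @property
    def ridge_point(self) -> float:
        # Arithmetic intensity (FLOP/byte) at which the chip stops being
        # limited by memory bandwidth and starts being limited by compute.
        return self.peak_flops / self.mem_bandwidth


def attainable_flops(acc: Accelerator, intensity: float) -> float:
    """Roofline bound: the lower of the compute roof and the bandwidth roof."""
    return min(acc.peak_flops, acc.mem_bandwidth * intensity)


def is_memory_bound(acc: Accelerator, intensity: float) -> bool:
    """True when the workload's intensity falls below the ridge point."""
    return intensity < acc.ridge_point


def decode_intensity(batch_size: int, bytes_per_param: int = 2) -> float:
    # Simplifying assumption: every weight is fetched once per step and
    # reused for one multiply-add (2 FLOPs) per sequence in the batch.
    return 2.0 * batch_size / bytes_per_param


# Hypothetical chip: 400 TFLOP/s of compute, 1.6 TB/s of memory bandwidth.
chip = Accelerator(peak_flops=400e12, mem_bandwidth=1.6e12)

for batch in (1, 32, 512):
    ai = decode_intensity(batch)
    bound = "memory-bound" if is_memory_bound(chip, ai) else "compute-bound"
    print(f"batch={batch:4d}  intensity={ai:6.1f} FLOP/byte  {bound}")
```

Under these assumed numbers, small-batch serving sits far below the ridge point, which is the regime where reducing or offloading data movement would matter more than adding compute.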

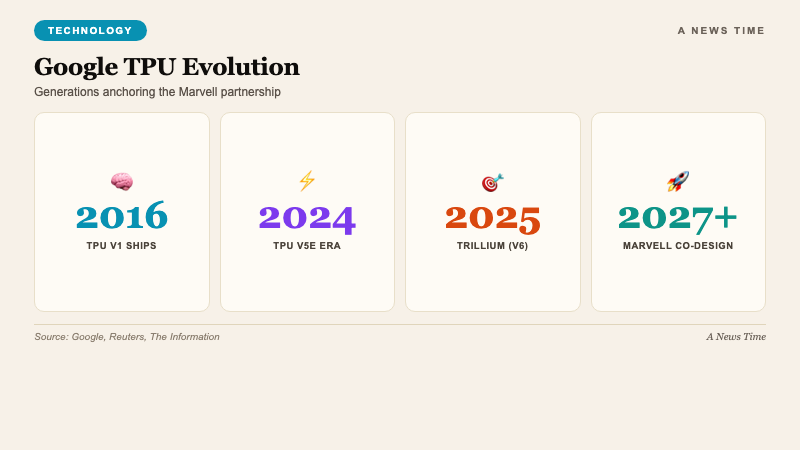

The second chip is a new generation of Google's tensor processing unit. Google has been shipping TPUs since 2016 and has used them internally to train and serve Search, Gmail spam filtering, Translate, and the Gemini model family. What the new TPU design adds, per The Information's framing, is purpose-built optimization for running models rather than training them. Inference is where Google's cloud margin case lives, because serving billions of queries per day against a frozen model requires different tradeoffs than the high-flops training pipelines that dominated the first TPU generations.

| Generation | Year | Primary workload |
|---|---|---|
| TPU v1 | 2016 | Inference |
| TPU v2 / v3 | 2017-2018 | Training + inference |
| TPU v4 | 2020 | Large-scale training |
| TPU v5e / v5p | 2023-2024 | Efficient inference / training |
| Trillium (v6) | 2025 | Gemini-scale training |
| New TPU w/ Marvell | 2026-2027 (projected) | Model serving |

A Marvell partnership is a departure from Google's recent custom-chip playbook. Broadcom has been Google's primary TPU co-design partner since the TPU v4 generation, and the two companies have collaborated on packaging, interconnects, and networking silicon. Bringing Marvell into the equation suggests either a deliberate diversification of supply-chain risk, a specific Marvell capability Broadcom cannot match on timeline, or both.

Why Marvell Shares Responded

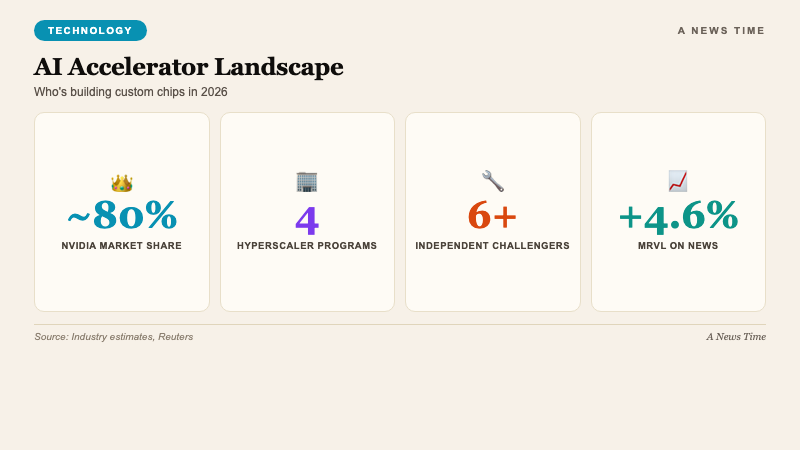

Marvell shares gained roughly 4.6% in trading on April 20, the session after the report broke. The move is not just about a single design win, though that in itself is material given the revenue profile of hyperscaler AI chips. The broader story for Marvell is that it has spent the past two years repositioning itself as a data-center silicon specialist after divesting legacy consumer and networking businesses. AWS, Microsoft Azure, and Meta have all been reported as Marvell partners on custom AI silicon programs.

"Google has been pushing to make its TPUs a viable alternative to Nvidia's dominant GPUs. TPU sales have become a key driver of growth in Google's cloud revenue as it aims to show investors that its AI investments are generating returns."

Reuters, April 19, 2026

The Google-Marvell tie-up would add a fourth hyperscaler to that list, if confirmed. For analysts covering Marvell, the value of a Google program is roughly proportional to the scale of TPU deployment in Google Cloud plus Google's internal consumption. Both numbers have been accelerating. TPU sales have become one of the cleanest growth drivers in Google Cloud's recent earnings commentary, with Alphabet executives citing AI-related revenue as the primary reason the segment has been narrowing its margin gap with AWS and Azure.

What This Actually Threatens for Nvidia

The short version: not much in the next 18 months. Nvidia's GPU dominance is anchored by the CUDA software stack and by a 10-year head start on datacenter-grade GPU architectures. A new TPU plus MPU pairing, co-designed with Marvell, would ship in test quantities in 2027 at the earliest, with production volumes likely in 2028. By then Nvidia will have shipped at least two more generations of Blackwell Ultra successors.

The longer-term version is more interesting. Google's explicit strategy, visible across the last three TPU generations, is to make TPUs the default for Google Cloud AI workloads while keeping Nvidia GPU availability as an option for customers with existing CUDA investments. The higher the share of Google Cloud AI revenue that runs on TPUs, the less Google has to pay Nvidia per AI-workload dollar. Marvell joining the supply chain adds leverage to that negotiation, both operationally and commercially.

The Google-Meta collaboration reported by Reuters in December 2025, which centered on building open-source software tooling to reduce CUDA lock-in, is the software counterpart to the Marvell hardware partnership. Neither move is a frontal assault on Nvidia. Both are moves to make the long-term Google stack self-sufficient in a way that preserves optionality.

The Industry Context

The Marvell talks are landing in the middle of what has become the most active US semiconductor corporate calendar in a decade. Cerebras has filed to go public. SpaceX, Anthropic, and OpenAI are preparing their own public listings, according to The New York Times. Bloomberg reported that China's cheap AI models are creating new winners in Chinese tech stocks, including chip designers less constrained by US export controls.

The AI chip market is no longer a binary Nvidia-versus-everyone conversation. It is becoming a stratified landscape with hyperscaler in-house programs (Google TPU, AWS Trainium, Meta MTIA, Microsoft Maia), independent challengers (Cerebras, Groq, SambaNova, Tenstorrent), Nvidia's continued market leadership, AMD's catch-up efforts, and China's domestic substitution programs. The Google-Marvell partnership, if it ships on roughly the reported timeline, fits inside that broader multi-vendor reality.

What Still Has to Happen

Three things need to go right for the reported partnership to matter in the market. First, Marvell and Google need to finalize the MPU design and hand it to test production on the reported timeline. Silicon design cycles slip, and hyperscaler AI programs that ship on time are the exception, not the rule. Second, Google's TPU software stack needs to keep compiling models as efficiently as it does on Trillium. Any performance regression in the new TPU generation undermines the architectural argument for it. Third, the MPU needs to deliver a measurable energy-per-inference improvement at realistic batch sizes. The memory-processing approach is theoretically compelling, but the empirical evidence will have to come from benchmarks on production workloads.
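As a rough illustration of what "energy per inference at realistic batch sizes" means as a metric, the sketch below divides the energy drawn while serving one batch by the number of requests in it. The function names and every measurement in the example are hypothetical placeholders chosen for the arithmetic, not benchmark results for any real chip.

```python
def energy_per_inference_joules(avg_power_watts: float,
                                batch_latency_s: float,
                                batch_size: int) -> float:
    """Energy spent per request: (power x time for the batch) / batch size."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return avg_power_watts * batch_latency_s / batch_size


def relative_saving(baseline_j: float, candidate_j: float) -> float:
    """Fractional energy saving of the candidate versus the baseline."""
    if baseline_j <= 0:
        raise ValueError("baseline_j must be positive")
    return 1.0 - candidate_j / baseline_j


# Hypothetical measurements: (batch size, avg power in W, batch latency in s).
baseline_runs = [(1, 300.0, 0.010), (8, 320.0, 0.020), (64, 350.0, 0.090)]
candidate_runs = [(1, 260.0, 0.009), (8, 280.0, 0.018), (64, 340.0, 0.088)]

for (batch, p0, t0), (_, p1, t1) in zip(baseline_runs, candidate_runs):
    base = energy_per_inference_joules(p0, t0, batch)
    cand = energy_per_inference_joules(p1, t1, batch)
    print(f"batch={batch:3d}  baseline={base:.3f} J  candidate={cand:.3f} J  "
          f"saving={relative_saving(base, cand):.1%}")
```

Reporting the figure per batch size matters because a design can look efficient at one operating point and much less so at the batch sizes a production service actually runs.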

None of those conditions are trivial. All of them are achievable on the reported schedule if the engineering teams execute. The industry will learn which direction this tilts over the next 18 months as first silicon comes back from test production.

Frequently Asked Questions

What are Google and Marvell building together?

Per The Information's April 19 report, they are co-developing two chips: a memory processing unit designed to work with Google's existing tensor processing units, and a new-generation TPU built specifically for running AI models. The companies aim to finalize the memory processing unit's design as soon as next year.

Why is Google partnering with Marvell on AI chips?

Google has been pushing to make its TPUs a viable alternative to Nvidia's GPUs, with TPU sales becoming a key growth driver for Google Cloud revenue. A Marvell partnership adds a second major silicon design partner alongside Broadcom and diversifies the supply chain.

Does this threaten Nvidia's GPU dominance?

Not immediately. The reported chips would not reach volume production until 2028 at the earliest. Nvidia's CUDA software stack remains the dominant AI development ecosystem. The longer-term question is how much of Google Cloud's AI workload shifts from Nvidia GPUs to custom silicon as the new chips ship.

How did Marvell stock react?

Marvell shares gained roughly 4.6% on April 20 following the report. Analysts interpret the reported partnership as a positive incremental addition to Marvell's custom data-center silicon business, which already includes work with AWS, Microsoft, and Meta.

What is a memory processing unit?

A memory processing unit is a chip designed to handle data movement, compression, and caching operations between compute cores and memory subsystems. In AI workloads, memory bandwidth and latency are often the bottleneck rather than raw compute, making dedicated memory processors a meaningful architectural lever for energy and performance efficiency.

What to Watch

The next confirmation point is whether Google officially acknowledges the partnership on its next earnings call, or whether either company surfaces technical details at a hardware conference in the coming months. Design specifics on the memory processing unit, in particular, will be the most information-rich indicator of how aggressive the architectural departure is. For Marvell, the pattern to watch is how many hyperscaler design wins it can add over 2026 as the industry's custom silicon programs keep multiplying.