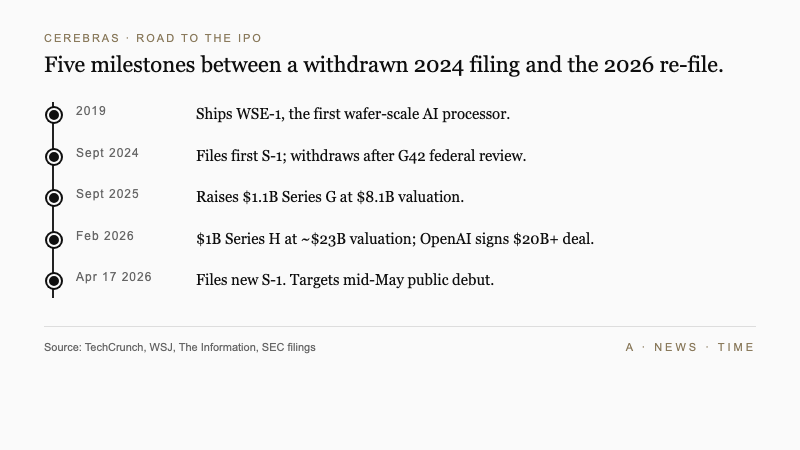

Cerebras Systems filed an S-1 registration statement with the SEC on April 17, reviving a bid to go public that the Sunnyvale, California, chip maker first attempted in 2024 and later abandoned. Chief executive Andrew Feldman is aiming for a mid-May 2026 market debut on the strength of $510 million in 2025 revenue, a multibillion-dollar supply agreement with OpenAI, and a deal to deploy Cerebras chips inside AWS data centers.

The filing lands in a narrow window. Cerebras is selling itself to public investors as the fastest credible alternative to Nvidia for training and running the largest artificial intelligence models, at a moment when AI infrastructure spending shows no signs of slowing and the IPO pipeline for tech companies is thickening behind it.

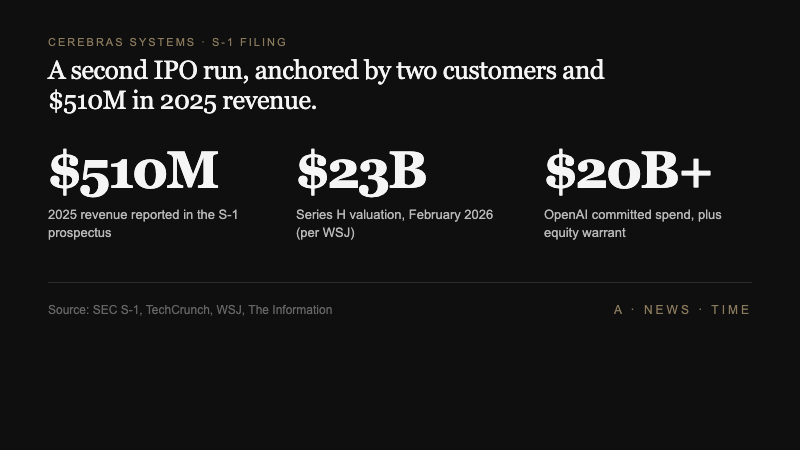

The numbers Cerebras is bringing to the S-1

The top line is straightforward. Cerebras reported $510 million in 2025 revenue and GAAP net income of $237.8 million, according to the prospectus. Back out a handful of one-time items and the company ran a non-GAAP net loss of $75.7 million, which is about what you would expect from a semiconductor startup still scaling a product as expensive to manufacture as a wafer-sized chip.
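The gap between those two profit figures can be checked with a line of arithmetic. The sketch below uses only the two numbers quoted above; the variable names are illustrative, and because the individual adjustments are not itemized here, it computes only their implied net total.

```python
# Figures quoted from the prospectus, in millions of US dollars.
gaap_net_income = 237.8
non_gaap_net_income = -75.7  # a net loss, so it is negative

# Net effect of everything excluded from the non-GAAP figure.
# This is an implied total only; the line items are not listed in this article.
implied_adjustments = round(gaap_net_income - non_gaap_net_income, 1)

print(f"Implied net adjustments: ${implied_adjustments}M")
```

In other words, roughly $313.5 million of items separates the reported GAAP profit from the non-GAAP loss.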

The private-market anchor for those numbers is a pair of late-stage rounds. Cerebras closed a $1.1 billion Series G in September 2025 at an $8.1 billion post-money valuation, followed by a $1 billion Series H in February 2026 that the Wall Street Journal reported valued the company at roughly $23 billion. The IPO prospectus does not yet disclose a target raise or a price range, which is standard for an initial S-1.
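For a rough sense of scale, the two valuations can be set against each other and against 2025 revenue. This is a back-of-the-envelope sketch using only figures quoted in this article; the $23 billion mark is a reported approximation, the variable names are illustrative, and a trailing revenue multiple is a crude yardstick rather than a model of how the offering will be priced.

```python
# Figures quoted in the article, in billions of US dollars.
series_g_valuation = 8.1   # post-money, September 2025
series_h_valuation = 23.0  # approximate, as reported for February 2026
revenue_2025 = 0.510

# How much the private valuation grew between the two rounds.
step_up = series_h_valuation / series_g_valuation

# Series H valuation expressed as a multiple of trailing 2025 revenue.
price_to_sales = series_h_valuation / revenue_2025

print(f"Series G to Series H step-up: {step_up:.1f}x")
print(f"Series H valuation / 2025 revenue: {price_to_sales:.0f}x")
```

On these inputs the valuation rose a little under threefold in about five months, and the Series H mark sits at roughly 45 times 2025 revenue.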

The path to this filing ran through Washington. Cerebras first tried to list in 2024, but the process stalled after federal regulators launched a review of an investment from G42, the Abu Dhabi-based technology conglomerate. The company withdrew that filing rather than wait it out. It did not waste the time in between.

The two deals that changed the math

Two customer agreements did more to reshape Cerebras' story than any fundraising round. The first is with AWS. Amazon will integrate Cerebras CS-3 systems, powered by the third-generation Wafer Scale Engine, into its global cloud footprint as an alternative to Nvidia-based training and inference clusters. That gives Cerebras an enterprise sales channel it could not build alone and a direct path to customers who buy compute through Amazon rather than by the rack.

The second deal is larger, and weirder. In a filing detail first reported by The Information, OpenAI committed to spending more than $20 billion on Cerebras chips and received a warrant to purchase Cerebras stock in return. The structure matters: OpenAI is both a customer and a beneficiary of Cerebras' eventual public-market upside, which aligns the two companies far more tightly than a normal supply contract would.

Feldman is not being subtle about what that customer win means.

"Obviously, [Nvidia] didn't want to lose the fast inference business at OpenAI, and we took that from them."

Andrew Feldman, CEO of Cerebras Systems, speaking to The Wall Street Journal

That is the story Cerebras wants investors to buy. It is also, in a real sense, the entire story. Take OpenAI and AWS out of the filing and not much of the growth narrative is left, which makes the company's revenue base far more concentrated than a typical semiconductor supplier's. That concentration is both the reason the IPO is credible and the single biggest risk buried in the S-1.

Why wafer-scale chips are a different kind of bet

Cerebras is not competing with Nvidia on Nvidia's terms. Most AI accelerators, including Nvidia's H100 and the newer Blackwell line, are discrete chips that get wired together into clusters. Training a large model across that architecture means constantly shipping data between chips over networking fabric, which becomes the bottleneck as models get larger.

The Wafer Scale Engine takes a different route. Instead of dicing a silicon wafer into hundreds of small chips, Cerebras keeps the wafer whole and treats it as a single processor. A Cerebras WSE-3 has roughly 900,000 cores and sits on a wafer the size of a dinner plate. Think of it less as "a bigger chip" and more as "a single-room server where the networking is replaced by copper traces that are millimeters long instead of cables that are meters long."

That design is expensive to manufacture and yields fewer units per wafer, but it removes a class of communication overhead that slows down the largest AI workloads. For inference, the phase where a trained model actually answers user queries, that latency advantage is what Feldman is selling.

| Milestone | Detail | Why it matters |
|---|---|---|
| Series G (Sept 2025) | $1.1B at $8.1B valuation | Bridged the withdrawn 2024 IPO |
| Series H (Feb 2026) | $1B at ~$23B valuation | Set the private-market anchor for public pricing |
| AWS integration deal | CS-3 systems in Amazon data centers | First scalable enterprise sales channel |
| OpenAI agreement | $20B+ spend + equity warrant | Cornerstone customer and aligned upside |
| 2025 revenue | $510 million | The number public investors will anchor on |

What Nvidia still has that Cerebras does not

Feldman's quote about taking the fast-inference business at OpenAI is narrowly true and broadly misleading in the same sentence. Cerebras did win a major inference workload, which is the single biggest customer validation in the company's history. Nvidia still runs almost everything else.

CUDA, Nvidia's software layer, is the reason most AI engineers do not have to think about the underlying hardware. That developer base is not switching to a new architecture unless the performance gap is enormous and the tooling is mature. Cerebras has invested in its own software stack, and the filing leans on customer testimony about ease of deployment, but the platform advantage Nvidia built over a decade does not evaporate because one customer moved one workload.

The broader AI chip market is also more crowded than the Cerebras narrative suggests. CNBC's coverage of the filing noted that Groq, SambaNova, and a growing roster of hyperscaler-designed silicon all compete for the same inference budgets Cerebras is chasing. And Broadcom's multiyear custom-chip work with Google and Anthropic shows that the biggest AI buyers are building around Nvidia rather than simply switching to a single alternative.

Stacy Rasgon, a semiconductor analyst at Bernstein, has argued for most of the past year that the real question for Nvidia challengers is not whether they can ship faster hardware on a narrow benchmark, but whether they can hold enough of the software ecosystem to keep engineers productive across a portfolio of workloads. On that second question, Cerebras is earlier in its journey than the IPO timing suggests.

The mid-May window, in context

Cerebras is not entering a quiet market. The New York Times reported that the prospectus arrived alongside public-market preparations at SpaceX, Anthropic, and OpenAI itself, in what is shaping up to be the most concentrated wave of tech listings since 2020. For Cerebras, being first through the door has an obvious upside. It also means the company will set the tone for how public investors price a new generation of AI-adjacent businesses, and a weak debut could drag the entire queue with it.

The geopolitical backdrop is less quiet still. The AI trade has been hit hard by the Iran conflict, with mega-cap chip names correcting sharply in the weeks before the Strait of Hormuz reopened. Cerebras is betting the recovery holds through its pricing window, and that investors who sat out the correction are ready to buy into AI hardware again.

Against a funding environment where Anthropic is fielding bids at up to $800 billion and OpenAI's latest round landed at levels most public-market chip companies would envy, a mid-May Cerebras debut has plenty of comparable pricing to lean on. Whether those comparables survive contact with daily trading is the first question the filing will actually answer.

What to watch next

Three things will tell you whether this IPO works. First, the pricing range when Cerebras files its amended prospectus, typically a week or two before pricing. A number well above the $23 billion private mark signals that banks see durable demand beyond the two anchor customers. A number at or below it means the public market is discounting the concentration risk.

Second, the customer disclosure. The filing reveals the existence of the OpenAI and AWS deals. What the final prospectus spells out about their revenue contribution, minimum commitments, and termination clauses will determine how Wall Street models the next two years.

Third, what Nvidia does next. Jensen Huang's company has not needed to compete on price, but a successful Cerebras debut changes the narrative for enterprise buyers who have been told, correctly, that there was no credible alternative. If Nvidia responds with a sharper inference product or pricing that narrows the gap, the Cerebras pitch gets harder. If it does not, the IPO becomes a template that SambaNova, Groq, and the next wave of challengers will follow.

Feldman's company has done the work to get to this filing. What the market does with it in the coming weeks is a separate question, and a more interesting one. Does the wafer-scale bet clear the public-market bar, or does Cerebras become the first cautionary tale of the 2026 AI IPO wave?

Frequently Asked Questions

When is the Cerebras IPO expected?

Cerebras has told reporters the offering is planned for mid-May 2026, roughly three to four weeks after the April 17 S-1 filing. The amended prospectus with a price range will typically arrive a week or two before pricing.

What is the Wafer Scale Engine?

The WSE is a processor built on a single uncut silicon wafer, roughly the size of a dinner plate, with around 900,000 cores. It is designed to eliminate the networking overhead that slows down large AI workloads running across clusters of smaller chips.

Why did the 2024 IPO fail?

A federal review of an investment from G42, the Abu Dhabi-based technology conglomerate, held up the 2024 filing. Cerebras withdrew rather than wait through the review and refiled in 2026 after expanding its customer base.

How big is the OpenAI deal?

OpenAI has committed to spending more than $20 billion on Cerebras chips according to The Information, with an equity warrant that lets the lab buy Cerebras stock. The structure makes OpenAI both the largest customer and an aligned shareholder.

Is Cerebras actually a threat to Nvidia?

In the specific workload of fast inference on the largest models, Cerebras has won at least one flagship customer and argues it is faster than Nvidia alternatives. Across the broader AI chip market, Nvidia's CUDA ecosystem and customer footprint remain dominant.

Sources

- AI chip startup Cerebras files for IPO - TechCrunch

- AI chipmaker Cerebras files to go public after scrapping IPO plans last year - CNBC

- Cerebras Files for IPO as Demand Surges for More Efficient AI Chips - The Wall Street Journal

- OpenAI to Spend More Than $20 Billion on Cerebras Chips, Receive Equity Stake - The Information

- Cerebras, an A.I. Chip Maker, Files to Go Public - The New York Times