Somewhere in our understanding of the universe, something is wrong. We do not know what. But we now know with greater confidence than ever that the wrongness is real.

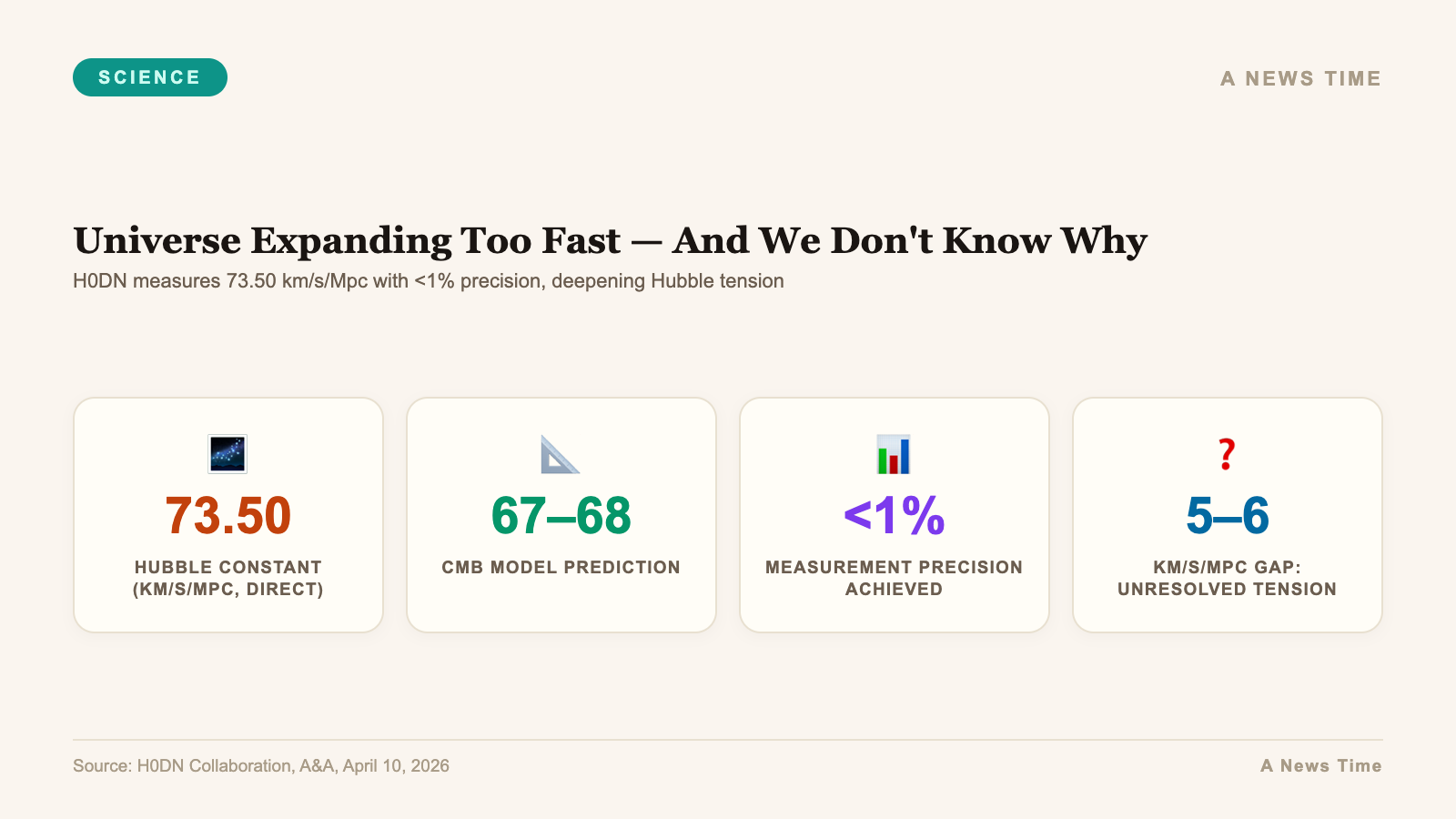

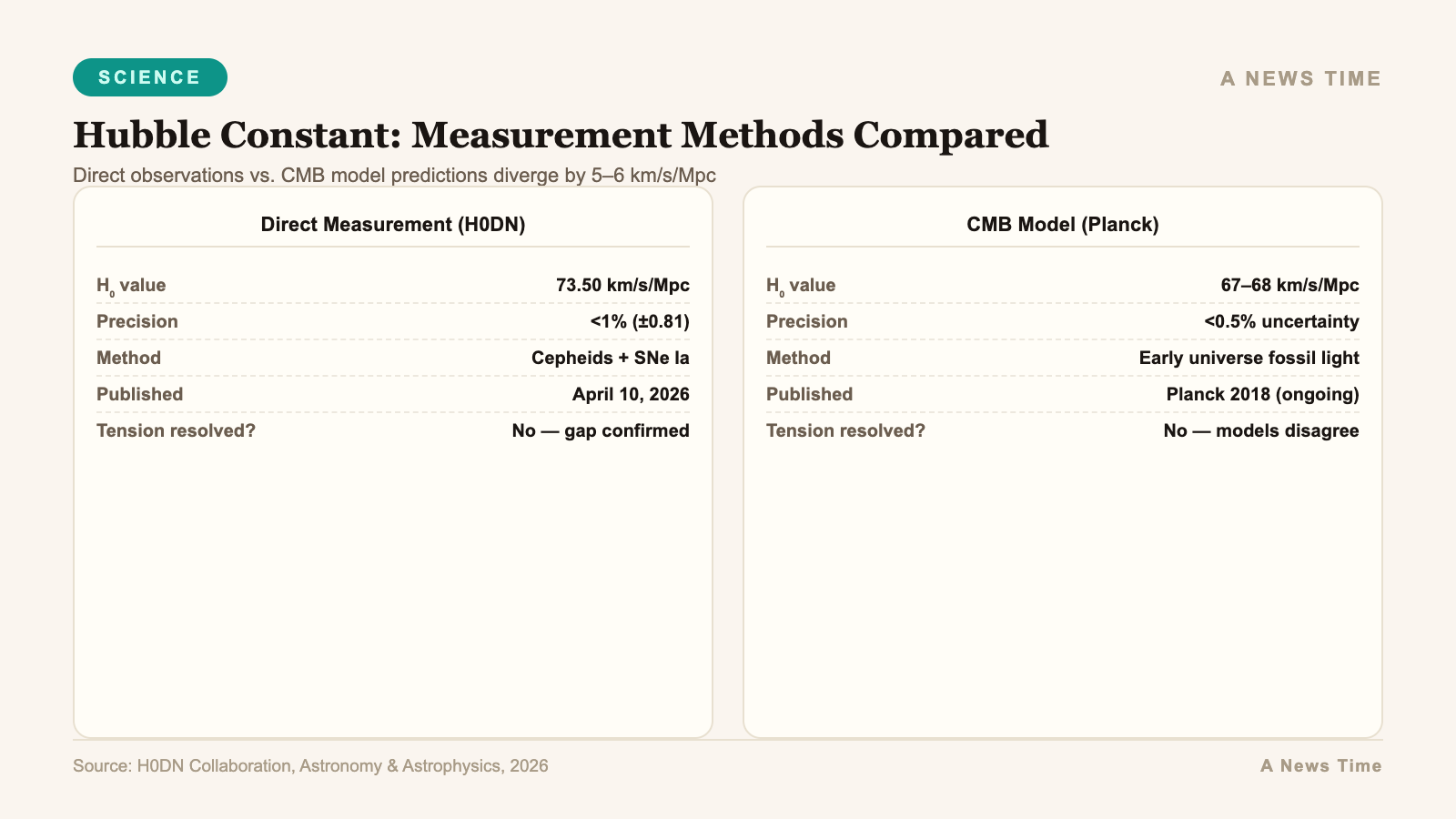

A major international collaboration has published what it describes as a community consensus measurement of the Hubble constant, the number that describes how fast the universe is expanding. Their result, published April 10, 2026 in the journal Astronomy and Astrophysics, places the expansion rate at 73.50 plus or minus 0.81 kilometers per second per megaparsec, a precision of roughly 1 percent (0.81 out of 73.50 is about 1.1 percent). That sounds like a triumph of precision measurement. In one sense, it is. In another, it has just made one of the most uncomfortable problems in modern physics considerably harder to explain away.

The problem is this: the universe's expansion rate as measured directly from nearby stars and galaxies stubbornly disagrees with the expansion rate predicted by the standard cosmological model, which extrapolates from measurements of the early universe's fossil light, the cosmic microwave background. The direct measurements say approximately 73. The model predictions say approximately 67 or 68. That gap, five or six kilometers per second per megaparsec, is what cosmologists call the Hubble tension. For years, some researchers hoped it was a measurement artifact, some systematic error hiding in the data. The new study, led by the H0 Distance Network (H0DN) Collaboration, has essentially closed that escape route.

What a megaparsec is and why you should care

A megaparsec is 3.26 million light-years. When we say the universe is expanding at 73.5 kilometers per second per megaparsec, we mean that two points in space separated by one megaparsec are moving apart at 73.5 kilometers per second due to cosmic expansion. Two points separated by two megaparsecs are moving apart at 147 kilometers per second. The farther apart two objects are, the faster they appear to be receding from each other, not because they are moving through space, but because the space between them is growing.
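
The arithmetic in the paragraph above is simply Hubble's law, velocity equals the Hubble constant times distance. A minimal Python sketch, using the 73.5 figure and the 3.26 million light-year conversion quoted in this article (the function and constant names are illustrative, not from the study):

```python
# Hubble's law for nearby objects: recession velocity = H0 * distance.
H0_KM_S_PER_MPC = 73.5        # value quoted in the article, km/s per megaparsec
LIGHT_YEARS_PER_MPC = 3.26e6  # approximate conversion quoted in the article


def recession_velocity_km_s(distance_mpc: float, h0: float = H0_KM_S_PER_MPC) -> float:
    """Return the recession velocity in km/s for a separation given in megaparsecs."""
    return h0 * distance_mpc


if __name__ == "__main__":
    for d in (1.0, 2.0, 10.0):
        v = recession_velocity_km_s(d)
        light_years = d * LIGHT_YEARS_PER_MPC
        print(f"{d:5.1f} Mpc ({light_years:.3g} light-years): {v:.1f} km/s")
```

The linear relation is only a good approximation for relatively nearby objects; at very large distances the full expansion history matters.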

Edwin Hubble measured the first rough version of this constant in 1929. His initial estimate was spectacularly wrong, off by a factor of seven from the modern value, but the concept was right: the universe has a measurable expansion rate. The constant was named after him. For much of the 20th century, cosmologists argued about whether the Hubble constant was closer to 50 or to 100, a debate that was not resolved until the Hubble Space Telescope's Key Project finally narrowed it to around 72 in 2001.

Today the precision has increased dramatically. But rather than converging on a single agreed value, different measurement methods have converged on two different values that do not agree with each other. That is the Hubble tension.

The two ladders to cosmic distance

Understanding why this is hard requires understanding that there is no single direct way to measure cosmic distances. Astronomers have built what they call the cosmic distance ladder, a succession of overlapping techniques that each calibrate the next rung up.

The bottom rung is geometry: for nearby stars, you can measure the tiny shift in apparent position as Earth orbits the Sun, a technique called parallax, and calculate the true distance. From there, you calibrate Cepheid variable stars, which pulse with periods directly related to their intrinsic brightness, allowing you to infer distance from apparent brightness. From Cepheids, you calibrate Type Ia supernovae, which explode with a characteristic maximum brightness that makes them visible across billions of light-years. Each step up the ladder introduces potential errors, and each calibration depends on the previous one.
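
The first two rungs rest on two standard textbook relations: distance in parsecs is the inverse of the parallax angle in arcseconds, and for an object of known intrinsic brightness the distance follows from the difference between apparent and absolute magnitude. The sketch below shows only those two formulas. The function names are illustrative, and real analyses add corrections (dust, metallicity, instrument calibration) that are left out here.

```python
def parallax_distance_pc(parallax_arcsec: float) -> float:
    """Distance in parsecs from a parallax angle in arcseconds (d = 1 / p)."""
    if parallax_arcsec <= 0:
        raise ValueError("parallax must be positive")
    return 1.0 / parallax_arcsec


def standard_candle_distance_pc(apparent_mag: float, absolute_mag: float) -> float:
    """Distance in parsecs from the distance modulus m - M = 5 * log10(d / 10 pc)."""
    return 10.0 ** ((apparent_mag - absolute_mag + 5.0) / 5.0)


if __name__ == "__main__":
    # Hypothetical inputs chosen only to illustrate the formulas.
    print(parallax_distance_pc(0.01))               # parallax of 0.01 arcsec
    print(standard_candle_distance_pc(10.0, -5.0))  # m = 10, M = -5
```

A parallax of 0.01 arcseconds corresponds to 100 parsecs, which is why the geometric rung only reaches nearby stars and everything beyond depends on calibrated brightness.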

The H0DN Collaboration, whose work grew out of an International Space Science Institute workshop in Bern, Switzerland in March 2025, took a different approach. Rather than building a single chain of calibrations, they constructed what they call a "distance network" that links multiple independent methods together, including Cepheid variables, red giant stars (which reach a known maximum brightness at a predictable point in their evolution), Type Ia supernovae, surface brightness fluctuations in galaxies, and gravitational lens time delays. The data came from ground-based observatories at NSF's Cerro Tololo Inter-American Observatory in Chile and Kitt Peak National Observatory in Arizona, as well as space-based measurements.

The critical test was whether removing any single technique changed the answer. It did not. The network is robust to the exclusion of individual rungs. This is the observation that makes the new result hard to dismiss as a calibration artifact.
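
The idea of that test can be sketched as a leave-one-out check: combine the measurements, then recombine with each method removed in turn and see how far the answer moves. The code below is a deliberately simplified illustration that assumes independent measurements combined by an inverse-variance weighted mean. The collaboration's actual network fit is more elaborate, and the numbers are made-up placeholders, not H0DN inputs or results.

```python
def weighted_mean(measurements):
    """Inverse-variance weighted mean and its 1-sigma uncertainty.

    `measurements` maps a method name to a (value, error) pair.
    """
    weights = [1.0 / err**2 for _, err in measurements.values()]
    values = [val for val, _ in measurements.values()]
    total = sum(weights)
    mean = sum(w * v for w, v in zip(weights, values)) / total
    return mean, total ** -0.5


def leave_one_out(measurements):
    """Recompute the combined value with each method excluded in turn."""
    results = {}
    for name in measurements:
        subset = {k: v for k, v in measurements.items() if k != name}
        results[name] = weighted_mean(subset)
    return results


if __name__ == "__main__":
    # Made-up placeholder numbers (value, 1-sigma error) in km/s/Mpc.
    # They are NOT the H0DN inputs or results.
    toy = {
        "method_A": (73.0, 1.5),
        "method_B": (74.0, 2.0),
        "method_C": (73.5, 1.8),
    }
    full_mean, full_err = weighted_mean(toy)
    print(f"all methods: {full_mean:.2f} +/- {full_err:.2f}")
    for dropped, (mean, err) in leave_one_out(toy).items():
        print(f"without {dropped}: {mean:.2f} +/- {err:.2f}")
```

If dropping any one method shifted the combined value by much more than its uncertainty, that method would be a suspect for a hidden systematic error. The article reports that no such shift was found.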

73.50 and the wall it hits

The team's final measurement, 73.50 plus or minus 0.81 kilometers per second per megaparsec, sits firmly in the middle of the cluster of recent direct measurements, most of which have returned values in the 73-74 range. The Planck satellite's measurement of the cosmic microwave background, combined with the standard cosmological model (technically called Lambda-CDM, for the cosmological constant and cold dark matter), predicts a Hubble constant of approximately 67.4 plus or minus 0.5 kilometers per second per megaparsec.

The two values are separated by about six standard deviations in statistical terms. To put that in context: a two-standard-deviation discrepancy is considered interesting but not conclusive. Three standard deviations is considered evidence. Five standard deviations is the conventional physics threshold for declaring a discovery. Six standard deviations of disagreement between two independent, rigorously checked results is what cosmologists call a crisis.
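
The "about six" figure can be checked from the two numbers quoted above. A minimal sketch, assuming the two uncertainties are independent and Gaussian so that they add in quadrature (a common back-of-envelope convention, not necessarily the paper's own statistical treatment):

```python
import math


def tension_sigma(value_a: float, err_a: float, value_b: float, err_b: float) -> float:
    """Gap between two measurements in units of their combined 1-sigma error."""
    combined_error = math.hypot(err_a, err_b)  # sqrt(err_a**2 + err_b**2)
    return abs(value_a - value_b) / combined_error


if __name__ == "__main__":
    local = (73.50, 0.81)  # direct measurement quoted in the article
    planck = (67.4, 0.5)   # Lambda-CDM prediction quoted in the article
    print(f"{tension_sigma(*local, *planck):.1f} sigma")
```

With these inputs the gap of 6.1 is divided by a combined error of about 0.95, which gives roughly 6.4 standard deviations.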

The authors are careful in their language, as scientists should be. They write that their result "effectively rules out explanations of the Hubble tension that rely on a single overlooked error in local distance measurements." They go on to note that "if the tension is real, as the growing body of evidence suggests, it may point to new physics beyond the standard cosmological model."

"New physics beyond the standard cosmological model" is the kind of phrase scientists do not use lightly. It means that the framework we use to describe the entire history and evolution of the universe from the Big Bang to the present may be missing something. What that something might be is the subject of genuine scientific debate, multiple competing hypotheses, and no consensus.

What could cause the universe to expand too fast

Several categories of explanation have been proposed. None has achieved consensus.

The first category involves dark energy. The standard model describes dark energy as a cosmological constant, a uniform energy density that is the same everywhere and at all times and that drives the acceleration of cosmic expansion. If dark energy instead evolves over time, varying in its strength as the universe ages, the predictions for today's expansion rate could differ from what a static model calculates. Some recent observations from the Dark Energy Spectroscopic Instrument have hinted at possible time-variation in dark energy, but those hints remain contested.

The second category involves early universe physics. Perhaps there was an unknown burst of exotic physics shortly before the cosmic microwave background was emitted, roughly 380,000 years after the Big Bang, that altered the universe's expansion history in ways not captured by the standard model. This class of solutions goes by names like "early dark energy" or "extra radiation species." They have the advantage of addressing the problem at its root but the disadvantage of requiring new particles or fields not observed in laboratory physics.

The third category involves gravity. General relativity is extraordinarily well-tested on solar system scales. On cosmological scales, it has been tested less precisely. Modified gravity theories, in which the behavior of gravity changes on large scales, could produce a different expansion history without invoking new types of matter or energy. These theories face significant challenges from other observations but cannot yet be definitively ruled out.

The fourth category, and the one that many physicists find least comfortable, is that we are simply missing something fundamental. The universe began in a state we do not fully understand. It evolved through processes some of which we have not yet measured. And it is now expanding at a rate that does not match our best model. The discomfort is not a reason to abandon the model, but it is a reason to remain genuinely uncertain about what lies beyond it.

What the distance network framework unlocks

One underappreciated aspect of the H0DN result is its infrastructure value. By making their methods and data publicly available in a standardized framework, the collaboration has created a platform that future measurements can slot into directly. As new observatories come online, including the Vera C. Rubin Observatory in Chile, the Nancy Grace Roman Space Telescope in orbit, and eventually the Extremely Large Telescope, their data can be incorporated into the network to progressively refine the Hubble constant measurement.

If the Hubble tension survives that scrutiny at its current statistical significance, the pressure on theoretical cosmology will become enormous. If some future measurement shifts the result toward 70, bridging part of the gap, theorists will have more room to propose solutions. Right now, the gap is large enough that any proposed solution must do considerable work.

John Blakeslee of NSF NOIRLab, who serves as Director of Research and Science Services and contributed to the collaboration, put the methodological achievement in context. The distance network is not just a new number; it is a new way of obtaining and cross-checking that number, one designed to be self-correcting as new data arrive. That is a different kind of contribution than any individual measurement could provide.

The universe as a broken instrument

There is something almost philosophically striking about the Hubble tension. The universe is, among other things, the oldest and largest physics experiment there is. It has been running for 13.8 billion years. We have developed extraordinary instruments to read its outputs. Two different classes of instrument, looking at two different epochs of the universe's history, are telling us two different things about its expansion rate. Either one class of instrument is wrong, or the universe itself is more complex than our model describes.

The H0DN result has now made the first possibility significantly less plausible. The instruments looking at the nearby universe are not obviously wrong. Their measurements are precise, cross-checked across multiple independent techniques, and consistent with each other at roughly the 1 percent level. The instruments looking at the early universe are also precise, cross-checked, and consistent.

What is not consistent is the universe they are measuring. Or more precisely, the model we use to connect those measurements is the piece that may require revision.

The study "The Local Distance Network: A community consensus report on the measurement of the Hubble constant at approximately 1% precision" was published in Astronomy and Astrophysics, volume 708, article A166. The collaboration includes scientists from institutions spanning North America, Europe, South America, and Asia. If the Hubble tension resolves the way the field expects, some version of this paper will eventually be cited in Nobel Prize lectures. If it doesn't, the revision it precipitates may be just as significant.

What we still don't know

Everything, essentially, that matters most. We do not know whether the Hubble tension reflects new physics or some yet-undiscovered systematic error that has fooled every measurement team independently. We do not know which proposed solution, if any of the current ones, is correct. We do not know whether dark energy is truly a cosmological constant or whether it varies. We do not know whether general relativity holds on cosmological scales. We do not know what the universe will look like in 100 billion years if its expansion continues to accelerate.

We do know, with roughly 1 percent precision, how fast the space between galaxies is currently growing. What that number is trying to tell us about the deeper nature of the cosmos is the most interesting open question in physics today.

Sources

- ScienceDaily / AURA: The Universe is expanding too fast and scientists still can't explain it (April 12, 2026)

- NSF NOIRLab: H0DN Collaboration press release (April 2026)

- Casertano et al., Astronomy and Astrophysics, 2026 (DOI: 10.1051/0004-6361/202557993)

- ESA: Planck mission and the cosmic microwave background