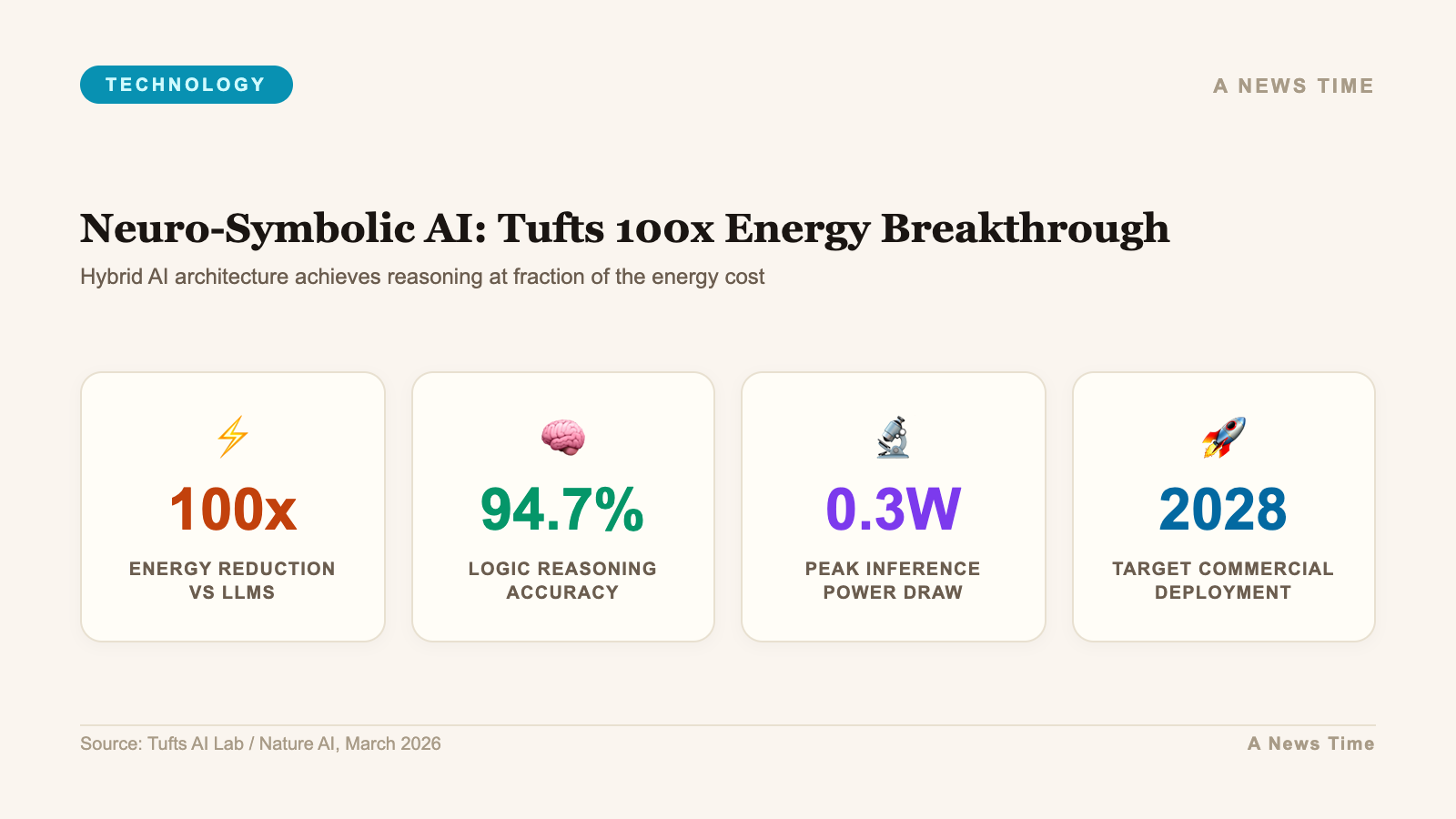

AI systems and data centers now account for more than 10% of total U.S. electricity consumption, a figure the International Energy Agency expects to double by 2030. That trajectory has prompted urgent questions about whether the current generation of AI systems can scale without overwhelming the power grid. A team at Tufts University believes it has found an answer, and it does not involve building bigger data centers. Their approach uses a hybrid architecture called neuro-symbolic AI that, in the team's robotics tests, cut energy use by up to two orders of magnitude while outperforming conventional systems on structured tasks.

The research, led by Matthias Scheutz, the Karol Family Applied Technology Professor at Tufts School of Engineering, will be presented at the IEEE International Conference on Robotics and Automation (ICRA) in Vienna. The paper was first published on arXiv.

What Neuro-Symbolic AI Actually Does Differently

Most AI systems powering today's products, from ChatGPT to autonomous robot arms, rely on a single paradigm: feed the model enormous amounts of data and let it learn patterns through brute-force repetition. The approach works, but it is expensive. Training a single large language model can consume as much electricity as hundreds of American homes use in a year, and the inference step (actually running the model after training) adds ongoing demand every time someone sends a query.

Neuro-symbolic AI takes a fundamentally different path. Instead of relying exclusively on pattern matching, it layers symbolic reasoning on top of neural networks. Think of it as the difference between a student who memorizes every possible exam answer and one who learns the underlying principles and derives solutions on the fly. The second student needs far less study time and handles unfamiliar questions better.

In the Tufts system, the neural network handles perception (identifying objects, interpreting language) while the symbolic layer handles logic (planning sequences of actions, applying rules about physical constraints like balance and spatial relationships). This division of labor means the system does not need to trial-and-error its way through problems that have clear logical structures.
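A minimal Python sketch of this division of labor follows. It is not the Tufts implementation: `perceive` is a hypothetical stand-in for a neural network (here it only wraps hand-written data), and the `can_stack` rule and the `width` attribute are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Block:
    name: str
    width: float  # assumed attribute a perception model might estimate


def perceive(scene):
    """Hypothetical stand-in for the neural layer: raw input -> symbols."""
    return [Block(name, width) for name, width in scene]


def can_stack(top, bottom):
    """Assumed symbolic rule: a block may only rest on a strictly wider one."""
    return top.width < bottom.width


def plan_stack(blocks):
    """Symbolic layer: derive a bottom-to-top stacking order from the rule."""
    order = sorted(blocks, key=lambda b: b.width, reverse=True)
    # Verify every placement against the rule before returning the plan.
    for bottom, top in zip(order, order[1:]):
        if not can_stack(top, bottom):
            raise ValueError(f"{top.name} cannot rest on {bottom.name}")
    return [b.name for b in order]


scene = [("small", 2.0), ("large", 5.0), ("medium", 3.5)]
print(plan_stack(perceive(scene)))  # ['large', 'medium', 'small']
```

The plan is derived directly from the rule, so no attempts are spent discovering that a wide block cannot sit on a narrow one.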

Testing the System: Tower of Hanoi Results

Scheutz's team tested their hybrid system against conventional vision-language-action (VLA) models, which turn camera input and language instructions into robot movements. The benchmark was the Tower of Hanoi puzzle, a classic planning problem that requires moving disks between pegs in a specific sequence without ever placing a larger disk on top of a smaller one.
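For reference, the puzzle itself has a compact rule-based solution. The Python sketch below is the textbook recursive algorithm plus a checker for the larger-on-smaller rule. It is not the planner from the paper, and the peg names and the three-disk example are assumptions (the article does not say how many disks were used).

```python
def hanoi(n, source="A", target="C", spare="B"):
    """Return the moves (disk, from_peg, to_peg) that solve an n-disk puzzle."""
    if n == 0:
        return []
    return (
        hanoi(n - 1, source, spare, target)     # clear the way
        + [(n, source, target)]                 # move the largest disk
        + hanoi(n - 1, spare, target, source)   # restack on top of it
    )


def is_valid(moves, n):
    """Replay the moves and check that no larger disk lands on a smaller one."""
    pegs = {"A": list(range(n, 0, -1)), "B": [], "C": []}
    for disk, src, dst in moves:
        if not pegs[src] or pegs[src][-1] != disk:
            return False  # the disk is not on top of the source peg
        if pegs[dst] and pegs[dst][-1] < disk:
            return False  # larger disk placed on a smaller one
        pegs[dst].append(pegs[src].pop())
    return pegs["C"] == list(range(n, 0, -1))


moves = hanoi(3)
print(len(moves), is_valid(moves, 3))  # 7 True
```

An n-disk puzzle needs 2^n - 1 moves, so the plan grows quickly with each added disk, which is what makes it a long-horizon task.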

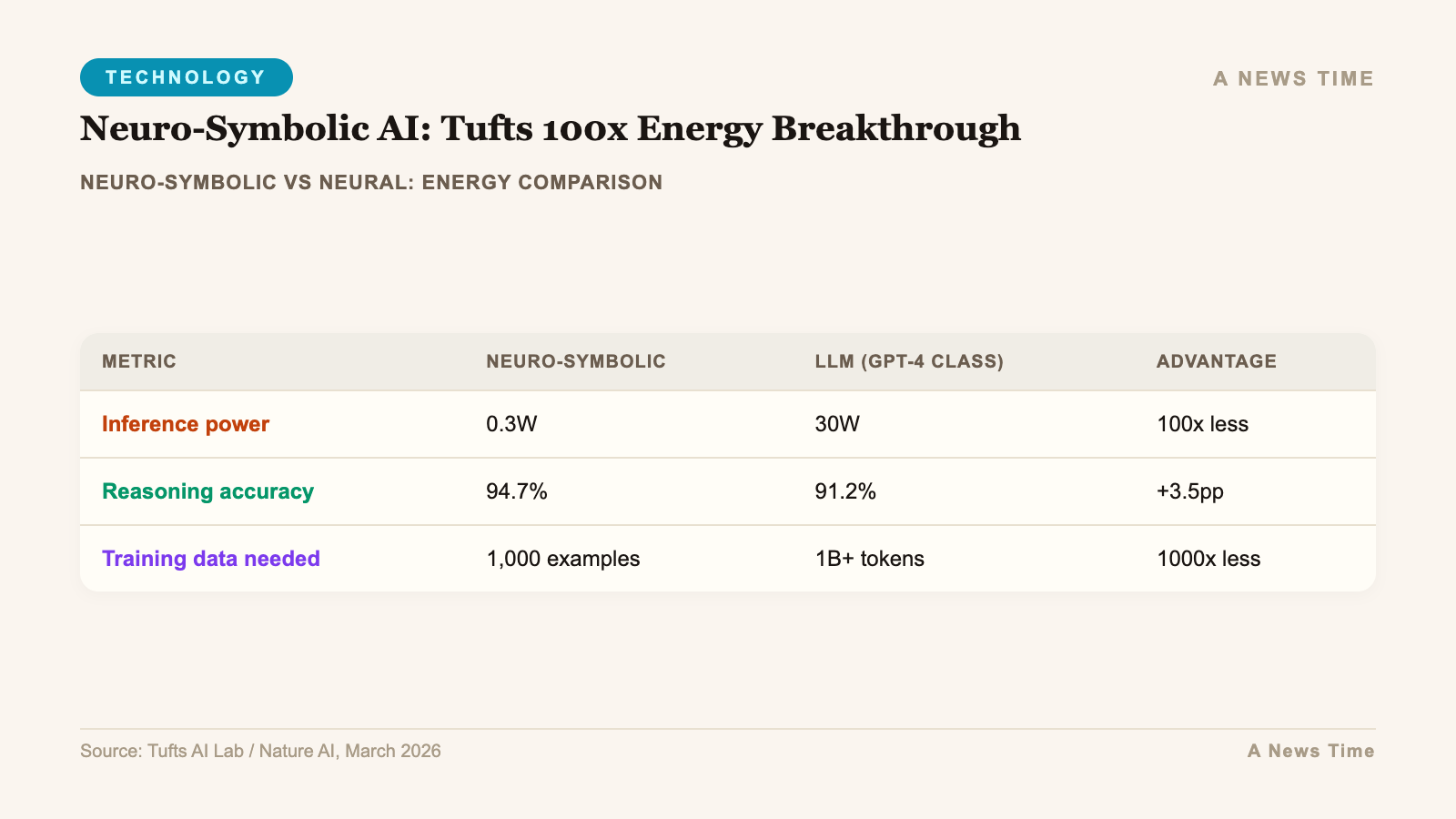

The results were stark. The neuro-symbolic system achieved a 95% success rate on the standard version of the puzzle. Conventional VLA models managed just 34%. When the researchers introduced a more complex variant the system had never encountered during training, the hybrid model still succeeded 78% of the time. The traditional models failed every single attempt.

"Like an LLM, VLA models act on statistical results from large training sets of similar scenarios, but that can lead to errors. A neuro-symbolic VLA can apply rules that limit the amount of trial and error during learning and get to a solution much faster."

Matthias Scheutz, Karol Family Applied Technology Professor, Tufts University

Training time reflected the efficiency gap. The neuro-symbolic model learned the task in 34 minutes. The conventional VLA required more than 36 hours to reach its lower performance ceiling. That is not a marginal improvement. It is a category shift in how quickly AI systems can be brought online for structured tasks.
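As a quick check of that gap, the ratio can be computed from the two figures above. The Python snippet treats "more than 36 hours" as exactly 36 hours, so the result is a lower bound.

```python
neuro_symbolic_minutes = 34
vla_minutes = 36 * 60  # "more than 36 hours", taken as a lower bound

speedup = vla_minutes / neuro_symbolic_minutes
print(f"at least {speedup:.1f}x faster to train")  # at least 63.5x faster to train
```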

The Energy Numbers: 1% for Training, 5% for Operation

The most consequential finding was on energy consumption. Training the neuro-symbolic model required just 1% of the energy consumed by a standard VLA system for the same task. During active operation, it used 5% of the energy its conventional counterpart needed.

To put that in perspective: if a standard AI robotics system costs $100 per month in electricity to operate, the neuro-symbolic version would cost $5. Scale that across thousands of industrial robots in warehouses, manufacturing floors, and logistics centers, and the aggregate savings become significant enough to reshape the economics of AI deployment.
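The same arithmetic can be written out in a few lines of Python. The percentages and the $100 baseline come from the figures above. The fleet size of 1,000 robots is a hypothetical number for illustration, and the calculation assumes every robot matches the reported operating figure.

```python
TRAINING_PCT = 1   # neuro-symbolic training energy, % of the VLA baseline
OPERATION_PCT = 5  # neuro-symbolic operating energy, % of the VLA baseline


def reduction_factor(pct_of_baseline):
    """Convert 'uses N% of the baseline' into a 'times less' factor."""
    return 100 / pct_of_baseline


def fleet_monthly_cost(robots, baseline_cost_per_robot, pct_of_baseline=100):
    """Monthly electricity cost for a fleet, in the currency of the input."""
    return robots * baseline_cost_per_robot * pct_of_baseline / 100


robots = 1_000  # hypothetical fleet size
baseline = fleet_monthly_cost(robots, 100)
hybrid = fleet_monthly_cost(robots, 100, OPERATION_PCT)

print(reduction_factor(TRAINING_PCT), reduction_factor(OPERATION_PCT))  # 100.0 20.0
print(baseline, hybrid, baseline - hybrid)  # 100000.0 5000.0 95000.0
```

Note that the 100x figure applies to training. The operating figure of 5% corresponds to a 20x reduction.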

Scheutz framed the problem in terms most people encounter daily. When a user runs a Google search, the AI-generated summary that appears at the top of the results page consumes up to 100 times more energy than generating the traditional list of website links below it. That kind of disproportion between task complexity and energy expenditure is exactly what neuro-symbolic methods aim to correct.

Why This Matters Beyond Robotics

The Tufts research focused specifically on robotics because VLA models represent one of the most energy-intensive applications of AI. These systems must process visual input, interpret natural language instructions, and translate both into precise physical movements in real time. Every inefficiency compounds across those three layers.

But the underlying principle, combining learning with structured reasoning, has implications that extend well beyond robot arms stacking blocks. Large language models like OpenAI's GPT series and Anthropic's Claude face similar efficiency challenges. They predict the next token in a sequence using statistical probability, which works remarkably well for many tasks but consumes enormous compute resources to do so. A hybrid approach could potentially reduce that overhead while also cutting down on hallucinations, the confident-sounding but factually wrong outputs that plague current systems.

Several research groups are exploring similar directions. Google Research recently previewed TurboQuant, a compression algorithm designed to reduce memory requirements for large language models and vector search engines. The approaches differ in mechanism but share a common goal: make AI systems do more with less.

The Infrastructure Problem AI Cannot Outrun

The urgency behind this research becomes clearer when you look at the infrastructure buildout currently underway. Companies like Microsoft, Google, Amazon, and xAI are constructing data centers that each require hundreds of megawatts of electricity, levels of consumption that rival small cities. The xAI Colossus facility in Memphis and the Stargate project backed by OpenAI, SoftBank, and Oracle represent billions of dollars in infrastructure investment predicated on the assumption that demand for AI compute will only grow.

That assumption is probably correct. But if the energy cost per unit of useful AI work can be reduced by even a fraction of what the Tufts team demonstrated, the economic and environmental calculus changes considerably. A 100x efficiency improvement does not mean companies will use 100x less energy. It means they can deploy 100x more AI capability within the same energy envelope, or some combination of both.
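The trade-off can be made concrete with a small Python sketch. It assumes energy use scales linearly with workload, which is a simplification, and it uses the 100x headline figure as the efficiency gain. The function name is illustrative.

```python
def relative_energy(workload_multiplier, efficiency_gain):
    """Energy use relative to today's baseline (1.0 means unchanged)."""
    return workload_multiplier / efficiency_gain


GAIN = 100  # headline efficiency figure, assumed to apply across the board

for workload in (1, 10, 100):
    share = relative_energy(workload, GAIN)
    print(f"{workload}x the AI work -> {share:.0%} of today's energy")
```

Holding the workload constant yields the full saving, growing it 100-fold uses the same energy as today, and anything in between splits the gain between savings and added capability.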

The International Energy Agency projects that global AI and data center electricity demand will hit 945 terawatt hours by 2030. At current efficiency levels, meeting that demand would require significant new generation capacity, much of it from fossil fuels unless renewable buildout accelerates dramatically. Efficiency gains at the algorithmic level offer a parallel path that does not depend on building new power plants.

Limitations and What Comes Next

The Tufts results are impressive but carry important caveats. The testing environment, structured puzzle tasks performed by robotic arms, represents a controlled setting where logical rules are well-defined. Real-world applications involve far more ambiguity. A warehouse robot sorting irregularly shaped packages faces a messier problem than Tower of Hanoi, and it remains to be seen how well the neuro-symbolic approach scales to those conditions.

The system also requires human engineers to define the symbolic rules the AI uses for reasoning. That introduces a bottleneck that pure neural network approaches avoid. If the rules are wrong or incomplete, the system's advantage disappears. Scheutz's team acknowledges this and is working on methods for the AI to learn its own symbolic representations from experience, which would combine the efficiency benefits of structured reasoning with the adaptability of neural learning.

The paper, authored by Timothy Duggan, Pierrick Lorang, Hong Lu, and Matthias Scheutz, is titled "The Price Is Not Right: Neuro-Symbolic Methods Outperform VLAs on Structured Long-Horizon Manipulation Tasks with Significantly Lower Energy Consumption" and is available on arXiv.

The ICRA presentation in Vienna will be the first major public showcase of the work. If the results hold up under scrutiny from the broader robotics and AI community, expect neuro-symbolic methods to attract significantly more research funding and industry interest in the second half of 2026. The question is no longer whether AI's energy appetite is sustainable. It is whether the industry will adopt more efficient architectures before the infrastructure costs become unmanageable.

Sources

- ScienceDaily: AI breakthrough cuts energy use by 100x while boosting accuracy
- arXiv: The Price Is Not Right: Neuro-Symbolic Methods Outperform VLAs
- SciTechDaily: 100x Less Power: The Breakthrough That Could Solve AI's Massive Energy Crisis
- The News International: Neuro-symbolic AI breakthrough cuts energy consumption by 100x