Thoughtworks, the global technology consultancy, has released volume 34 of its Technology Radar, and its central message runs counter to the prevailing narrative in software engineering. While the industry celebrates the speed at which AI agents can now generate code, the report argues that this acceleration is creating a new category of risk: cognitive debt, the growing gap between the volume of code AI produces and what human developers actually comprehend about their own systems.

What Cognitive Debt Actually Means for Software Teams

Technical debt is a familiar concept in software engineering: shortcuts taken today that create maintenance burdens tomorrow. Cognitive debt is different and, in Thoughtworks' framing, potentially more dangerous. It describes the situation where AI generates code faster than humans can understand it, leaving organizations with systems that work but that nobody fully comprehends.

The risk is not that AI-generated code is bad. Much of it is functional, passes tests, and ships to production without incident. The problem emerges when something goes wrong: a bug surfaces, a security vulnerability is exploited, or a system needs to be modified for new requirements. If the humans responsible for the codebase cannot reason about the code because they did not write it and have not read it closely, the time to diagnose and fix issues increases dramatically.

Adding to this, the report identifies semantic diffusion as a compounding factor: as new AI technologies proliferate, the terms used to describe them are adopted by different communities with subtly different meanings. "Agent," "autonomous," "agentic," and "self-correcting" all mean different things depending on who is using them, making it harder for teams to communicate clearly about what their systems actually do.

"The capabilities of AI have been increasing at a staggering rate over the last year. However, rather than displacing humans, we've seen in recent months that there's a significant need for humans to proactively implement appropriate practices and technical harnesses to ensure these capabilities are leveraged effectively and securely. The inflection point we're at isn't so much about technology. It's about technique."

Rachel Laycock, Chief Technology Officer, Thoughtworks

Four Themes Shaping the Engineering Landscape

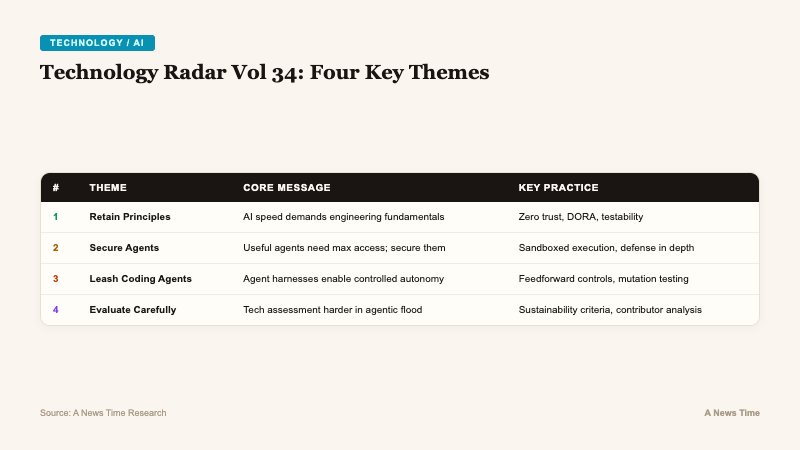

The Radar organizes its findings into four macro themes, each addressing a different dimension of the cognitive debt problem:

| Theme | Core Message | Key Technologies |
|---|---|---|
| Retaining Principles, Relinquishing Patterns | AI speed demands a return to engineering fundamentals | Zero trust architecture, DORA metrics, testability |
| Securing Permission-Hungry Agents | Useful agents need maximum access; security must match | Sandboxed execution, defense in depth |
| Putting Coding Agents on a Leash | Agent harnesses enable controlled autonomy | Feedforward controls, mutation testing |
| Evaluating Technology in an Agentic World | Assessment is harder when projects proliferate and terms blur | Sustainability criteria, contributor analysis |

Theme 1: Engineering Fundamentals Are Not Optional

The first theme argues that AI's speed is not a reason to abandon established engineering practices but a reason to double down on them. When code is generated faster, the traditional techniques that ensure quality, security, and maintainability become more critical, not less.

The report highlights three specific practices as essential in an AI-accelerated environment:

- Zero trust architecture: The assumption that no component of a system should be implicitly trusted, regardless of whether it sits inside or outside a network perimeter. In an AI context, this means treating AI-generated code with the same skepticism as code from any untrusted source.

- DORA metrics: The four key metrics (deployment frequency, lead time for changes, change failure rate, mean time to recovery) that measure software delivery performance. Thoughtworks argues these become more important when AI increases the volume of changes flowing through a pipeline.

- Testability: Designing systems so they can be effectively tested, a principle that AI-generated code often violates because the models optimize for functionality over observability.
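As a rough illustration of how the four DORA metrics listed above might be computed from deployment records, here is a minimal Python sketch. The `Deployment` record, its field names, and the `dora_metrics` function are hypothetical and do not come from the Radar or from any DORA tooling; real pipelines define "failure" and "recovery" in their own ways.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical record of one production deployment."""
    committed_at: datetime                  # first commit of the change
    deployed_at: datetime                   # when the change reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_metrics(deployments, window_days):
    """Compute the four metrics over a reporting window of `window_days` days."""
    if not deployments:
        raise ValueError("need at least one deployment")
    lead_times = [(d.deployed_at - d.committed_at).total_seconds() for d in deployments]
    failures = [d for d in deployments if d.failed]
    recoveries = [
        (d.restored_at - d.deployed_at).total_seconds()
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deployments_per_day": len(deployments) / window_days,
        "median_lead_time_hours": median(lead_times) / 3600,
        "change_failure_rate": len(failures) / len(deployments),
        # None when no failed deployment has a recorded recovery time.
        "mean_time_to_recovery_hours": (
            sum(recoveries) / len(recoveries) / 3600 if recoveries else None
        ),
    }


# Illustrative data only: four deployments over a four-day window.
t0 = datetime(2025, 1, 1)
h = timedelta(hours=1)
day = timedelta(days=1)
sample = [
    Deployment(t0, t0 + 2 * h),
    Deployment(t0, t0 + 4 * h, failed=True, restored_at=t0 + 5 * h),
    Deployment(t0 + day, t0 + day + 6 * h),
    Deployment(t0 + 2 * day, t0 + 2 * day + 8 * h, failed=True,
               restored_at=t0 + 2 * day + 11 * h),
]
metrics = dora_metrics(sample, window_days=4)
```

Tracking these numbers before and after introducing coding agents is one way to check whether a higher volume of changes is also raising the failure rate or slowing recovery.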

The underlying logic is a paradox that Thoughtworks makes explicit: the faster AI generates code, the more disciplined the surrounding engineering practices need to be. Teams that relax testing, code review, and architectural standards because "the AI handles it" are accumulating cognitive debt at an accelerating rate.

Theme 2: AI Agents Want All Your Data

The second theme addresses what may be the most pressing security challenge in the agentic AI era. The most useful AI agents are, by design, the ones that seek maximum access to private data and external systems. An agent that can read emails, access databases, call external APIs, and modify files is far more capable than one confined to a sandbox. But that capability comes with proportional risk.

Thoughtworks frames this as a non-negotiable requirement: sandboxed execution and defense in depth are now essential, not optional security enhancements. Every permission granted to an AI agent represents a potential attack vector, and the report argues that organizations are systematically underestimating this risk in their rush to deploy agentic systems.
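To make the layering idea concrete, the following Python sketch shows a deny-by-default gate that an agent's tool calls could pass through. It is an assumption-laden illustration, not the Radar's recommendation or any real framework's API: `AgentPolicy`, `authorize`, the tool names, and the workspace path are all hypothetical, and an in-process check like this complements OS-level sandboxing rather than replacing it.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical policy: everything not listed here is denied."""
    allowed_tools: frozenset                 # tools the agent may call at all
    workspace: Path                          # only directory the agent may touch
    needs_approval: frozenset = frozenset()  # tools that also need a human sign-off


def authorize(policy, tool, target, approved=False):
    """Return (allowed, reason). Each check is an independent layer."""
    # Layer 1: tool allowlist.
    if tool not in policy.allowed_tools:
        return False, "tool not on allowlist"
    # Layer 2: path confinement. Resolving first defeats "../" tricks and
    # absolute paths that point outside the workspace.
    root = policy.workspace.resolve()
    resolved = (root / target).resolve()
    if not resolved.is_relative_to(root):  # requires Python 3.9+
        return False, "path escapes workspace"
    # Layer 3: human approval for higher-risk tools.
    if tool in policy.needs_approval and not approved:
        return False, "human approval required"
    return True, "ok"


policy = AgentPolicy(
    allowed_tools=frozenset({"read_file", "write_file"}),
    workspace=Path("/srv/agent-workspace"),
    needs_approval=frozenset({"write_file"}),
)
```

With this policy, `authorize(policy, "read_file", "src/app.py")` is allowed, while a call to an unlisted tool, a path such as `../secrets.env`, or an unapproved `write_file` is refused with a reason that can be logged.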

The recent wave of AI-related security incidents adds urgency to this theme. As agents become more autonomous and handle more sensitive operations, the blast radius of a compromised agent grows correspondingly. A coding agent with write access to a production repository is a fundamentally different security proposition than a chatbot that answers customer questions.

Theme 3: Coding Agent Harnesses Enable Controlled Autonomy

The third theme introduces a concept that may become central to how organizations manage AI development tools: the coding agent harness. Rather than letting AI agents operate freely and reviewing their output after the fact, teams are building control frameworks that constrain and guide agent behavior in real time.

Two specific techniques are highlighted:

- Feedforward controls: Rules and constraints that shape the agent's behavior before it generates output, analogous to guardrails on a highway that prevent a vehicle from leaving the road rather than trying to course-correct after a crash.

- Mutation testing: A technique where the harness deliberately introduces small changes (mutants) into AI-generated code and checks whether the test suite catches them. If the tests still pass against a mutant, the suite is not actually verifying that behavior, however high its line coverage looks, and the harness can prompt the agent to generate stronger tests or flag the code for human review.
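The mutation testing idea can be sketched in a few lines of Python using only the standard `ast` module. This is a toy under stated assumptions, not how any particular harness or mutation testing tool works: the `is_adult` function, the single `>=` to `>` mutation operator, and both test suites are invented for illustration.

```python
import ast

# Code under test, kept as source text so it can be parsed and mutated.
SOURCE = """
def is_adult(age):
    return age >= 18
"""


class FlipGtE(ast.NodeTransformer):
    """One mutation operator: replace every `>=` with `>`."""

    def visit_Compare(self, node):
        self.generic_visit(node)
        node.ops = [ast.Gt() if isinstance(op, ast.GtE) else op for op in node.ops]
        return node


def load(tree):
    """Compile a module AST and return its `is_adult` function."""
    namespace = {}
    exec(compile(tree, "<mutation-demo>", "exec"), namespace)
    return namespace["is_adult"]


def weak_suite(fn):
    # Never probes the boundary at 18, so the mutant behaves identically.
    return fn(30) is True and fn(5) is False


def strong_suite(fn):
    # Adds boundary cases that distinguish `>=` from `>`.
    return weak_suite(fn) and fn(18) is True and fn(17) is False


def mutant_killed(suite):
    """True if `suite` passes on the original code but fails on the mutant."""
    original = load(ast.parse(SOURCE))
    if not suite(original):
        raise RuntimeError("suite must pass on the unmutated code")
    mutant_tree = ast.fix_missing_locations(FlipGtE().visit(ast.parse(SOURCE)))
    return not suite(load(mutant_tree))
```

Here `mutant_killed(weak_suite)` returns `False` (the mutant survives, so the tests are too weak) and `mutant_killed(strong_suite)` returns `True`. A harness could use a surviving mutant as the signal to request better tests or escalate to a human reviewer.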

The recent funding surge for AI code verification startups like Qodo reflects industry recognition that this problem needs dedicated tooling. The Thoughtworks report validates the direction but emphasizes that harnesses are still being iterated on: no one has a complete solution yet, and the best approaches are emerging through experimentation rather than established best practices.

Theme 4: How Do You Evaluate Technology When Everything Moves Faster?

The fourth theme is perhaps the most subtle but addresses a real operational challenge. The market is being flooded with new tools, libraries, and frameworks at a pace that makes evaluation itself a bottleneck. Many of these are single-contributor projects that may not be maintained long-term. New terms for emerging practices create confusion about what tools actually do versus what they claim.

Thoughtworks argues that assessing technology sustainability is becoming more difficult precisely when it matters most. When an organization adopts a tool that is abandoned six months later, the cost is not just the migration effort but the cognitive debt accumulated from building systems around patterns that are no longer supported.

The report recommends that engineering teams develop more rigorous evaluation criteria that go beyond functionality to assess contributor diversity, maintenance cadence, community health, and alignment with established standards. In an era where AI can generate a convincing-looking open source project in an afternoon, the traditional signals of project quality (GitHub stars, recent commits) are less reliable indicators of long-term viability.
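As a first-pass filter along these lines, a team might compute contributor concentration and maintenance recency from a project's commit history. The Python sketch below is purely illustrative: the `project_health` function, the flag names, and the thresholds (180 days, 80% share) are assumptions rather than criteria from the report, and signals like these can be gamed, so they support human judgment rather than replace it.

```python
from collections import Counter
from datetime import date


def project_health(commit_authors, last_commit, today,
                   stale_after_days=180, max_top_share=0.8):
    """Flag sustainability risks from a list of commit author names.

    Thresholds are hypothetical defaults, chosen only for illustration.
    """
    if not commit_authors:
        raise ValueError("need at least one commit")
    counts = Counter(commit_authors)
    # Share of all commits made by the single most active contributor.
    top_share = max(counts.values()) / len(commit_authors)
    days_idle = (today - last_commit).days

    flags = []
    if len(counts) == 1:
        flags.append("single contributor")
    elif top_share > max_top_share:
        flags.append("one contributor dominates")
    if days_idle > stale_after_days:
        flags.append("stale")

    return {
        "contributors": len(counts),
        "top_contributor_share": top_share,
        "days_since_last_commit": days_idle,
        "flags": flags,
    }


# Illustrative input: nine commits by one author, one by another.
report = project_health(
    ["alice"] * 9 + ["bob"],
    last_commit=date(2025, 1, 1),
    today=date(2025, 3, 1),
)
```

For this input the report lists two contributors, a top contributor share of 0.9, and the flag "one contributor dominates", which would prompt a closer manual look before adoption.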

The Paradox at the Heart of the Radar

Volume 34's most compelling insight is the paradox it names explicitly: the faster AI makes software development, the more essential traditional engineering discipline becomes. This runs counter to the narrative that AI will simplify software engineering by handling the "boring" parts. Thoughtworks argues the opposite is happening. AI is handling the easy parts (writing code) while making the hard parts (understanding systems, maintaining quality, ensuring security) more difficult.

For organizations that have adopted agentic AI development tools, the report serves as both a validation and a warning. The tools work. They generate code quickly. They will continue to improve. But the humans in the loop are not optional components that can be gradually removed. They are, as Laycock put it, the practitioners whose "technique" determines whether AI acceleration produces better software or just more software that nobody understands.

Thoughtworks, with 10,000 employees across 47 offices in 18 countries and over 30 years in the technology consulting business, has a vested interest in the continued relevance of human engineering expertise. That bias is worth noting. But the specific mechanisms the report identifies (cognitive debt, semantic diffusion, permission-hungry agents, the evaluation bottleneck) are real and observable across the industry. The Technology Radar's value has always been in naming patterns that practitioners recognize but have not yet articulated. Volume 34 does that effectively for the challenges of building software in an era when AI writes most of it.